",

"service_tier": "flex"

}'

```

#### API request timeouts

Due to slower processing speeds with Flex processing, request timeouts are more likely. Here are some considerations for handling timeouts:

- **Default timeout**: The default timeout is **10 minutes** when making API requests with an official OpenAI SDK. You may need to increase this timeout for lengthy prompts or complex tasks.

- **Configuring timeouts**: Each SDK will provide a parameter to increase this timeout. In the Python and JavaScript SDKs, this is `timeout` as shown in the code samples above.

- **Automatic retries**: The OpenAI SDKs automatically retry requests that result in a `408 Request Timeout` error code twice before throwing an exception.

## Resource unavailable errors

Flex processing may sometimes lack sufficient resources to handle your requests, resulting in a `429 Resource Unavailable` error code. **You will not be charged when this occurs.**

Consider implementing these strategies for handling resource unavailable errors:

- **Retry requests with exponential backoff**: Exponential backoff suits workloads that can tolerate delays and aim to minimize costs, because your request can eventually complete when more capacity is available. For implementation details, see [this cookbook](https://developers.openai.com/cookbook/examples/how_to_handle_rate_limits#retrying-with-exponential-backoff).

- **Retry requests with standard processing**: When receiving a resource unavailable error, implement a retry strategy with standard processing if occasional higher costs are worth ensuring successful completion for your use case. To do so, set `service_tier` to `auto` in the retried request, or remove the `service_tier` parameter to use the default mode for the project.
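The two strategies can be combined. The following is a minimal sketch, not an official recipe: `ResourceUnavailableError` and the `send` callable are hypothetical stand-ins for the SDK exception raised on a `429` and for your own function that issues the request with a given `service_tier`.

```python
import random
import time


class ResourceUnavailableError(Exception):
    """Hypothetical stand-in for the SDK exception raised on a 429 response."""


def call_with_flex_fallback(send, max_flex_attempts=3, base_delay=1.0, sleep=time.sleep):
    """Try Flex with exponential backoff, then fall back to standard processing.

    `send` is a hypothetical callable that takes a `service_tier` string,
    issues the request, and raises ResourceUnavailableError on a 429.
    """
    for attempt in range(max_flex_attempts):
        try:
            return send("flex")
        except ResourceUnavailableError:
            # Wait base_delay * 2^attempt seconds, plus a little jitter.
            delay = base_delay * (2 ** attempt)
            sleep(delay + random.uniform(0, delay * 0.1))
    # Flex capacity never freed up: retry once with the project's default tier.
    return send("auto")


# Demo with a fake sender that only succeeds outside the Flex tier.
attempted_tiers = []


def fake_send(service_tier):
    attempted_tiers.append(service_tier)
    if service_tier == "flex":
        raise ResourceUnavailableError("429 Resource Unavailable")
    return {"service_tier": service_tier, "output_text": "ok"}


result = call_with_flex_fallback(fake_send, sleep=lambda seconds: None)
print(attempted_tiers)
```

Injecting `sleep` keeps the helper easy to test; in production you would leave the default `time.sleep` in place and tune `max_flex_attempts` to how much delay the workload tolerates.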

---

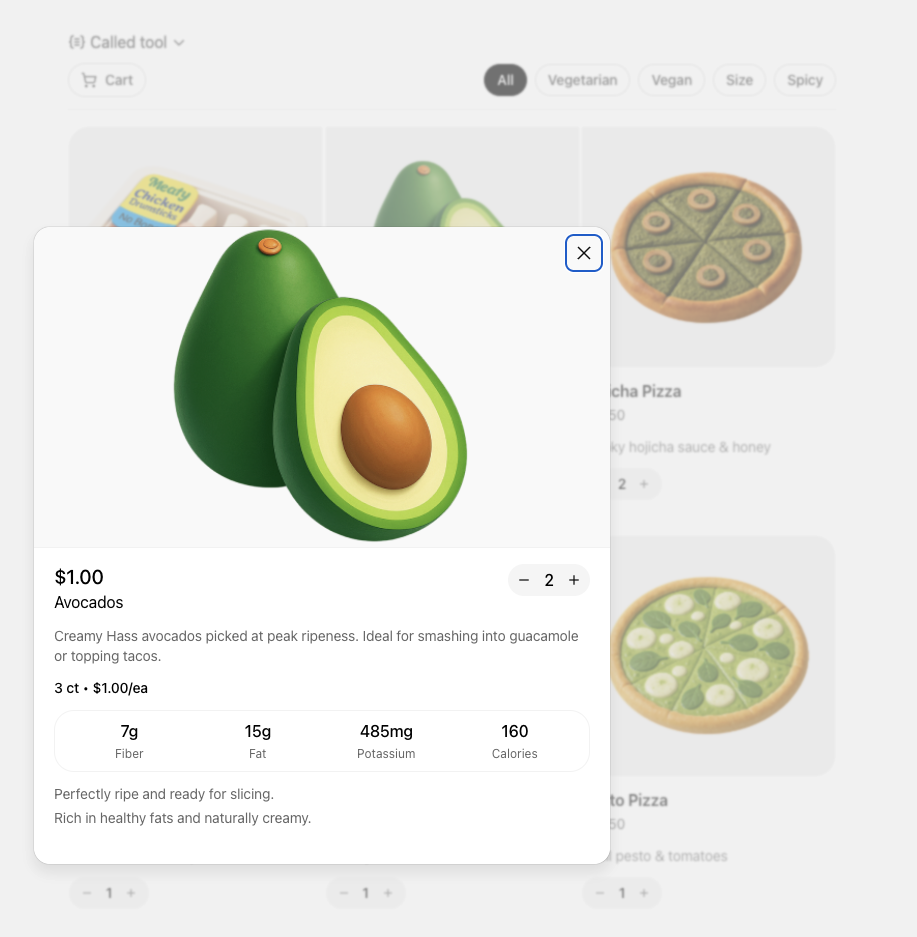

# Function calling

**Function calling** (also known as **tool calling**) provides a powerful and flexible way for OpenAI models to interface with external systems and access data outside their training data. This guide shows how you can connect a model to data and actions provided by your application. We'll show how to use function tools (defined by a JSON schema) and custom tools which work with free form text inputs and outputs.

If your application has many functions or large schemas, you can pair function calling with [tool search](https://developers.openai.com/api/docs/guides/tools-tool-search) to defer rarely used tools and load them only when the model needs them. Only `gpt-5.4` and later models support `tool_search`.

## How it works

Let's begin by understanding a few key terms about tool calling. After we have a shared vocabulary for tool calling, we'll show you how it's done with some practical examples.

**Tools - functionality we give the model**

A **function** or **tool** refers in the abstract to a piece of functionality that we tell the model it has access to. As a model generates a response to a prompt, it may decide that it needs data or functionality provided by a tool to follow the prompt's instructions.

You could give the model access to tools that:

- Get today's weather for a location

- Access account details for a given user ID

- Issue refunds for a lost order

Or anything else you'd like the model to be able to know or do as it responds to a prompt.

When we make an API request to the model with a prompt, we can include a list of tools the model could consider using. For example, if we wanted the model to be able to answer questions about the current weather somewhere in the world, we might give it access to a `get_weather` tool that takes `location` as an argument.

**Tool calls - requests from the model to use tools**

A **function call** or **tool call** refers to a special kind of response we can get from the model if it examines a prompt, and then determines that in order to follow the instructions in the prompt, it needs to call one of the tools we made available to it.

If the model receives a prompt like "what is the weather in Paris?" in an API request, it could respond to that prompt with a tool call for the `get_weather` tool, with `Paris` as the `location` argument.

**Tool call outputs - output we generate for the model**

A **function call output** or **tool call output** refers to the response a tool generates using the input from a model's tool call. The tool call output can either be structured JSON or plain text, and it should contain a reference to a specific model tool call (referenced by `call_id` in the examples to come).

To complete our weather example:

- The model has access to a `get_weather` **tool** that takes `location` as an argument.

- In response to a prompt like "what's the weather in Paris?" the model returns a **tool call** that contains a `location` argument with a value of `Paris`.

- The **tool call output** might return a JSON object (e.g., `{"temperature": "25", "unit": "C"}`, indicating a current temperature of 25 degrees), [image contents](https://developers.openai.com/api/docs/guides/images), or [file contents](https://developers.openai.com/api/docs/guides/file-inputs).

We then send the tool definition, the original prompt, the model's tool call, and the tool call output back to the model to finally receive a text response like:

```

The weather in Paris today is 25C.

```

**Functions versus tools**

- A function is a specific kind of tool, defined by a JSON schema. A function definition allows the model to pass data to your application, where your code can access data or take actions suggested by the model.

- In addition to function tools, there are custom tools (described in this guide) that work with free text inputs and outputs.

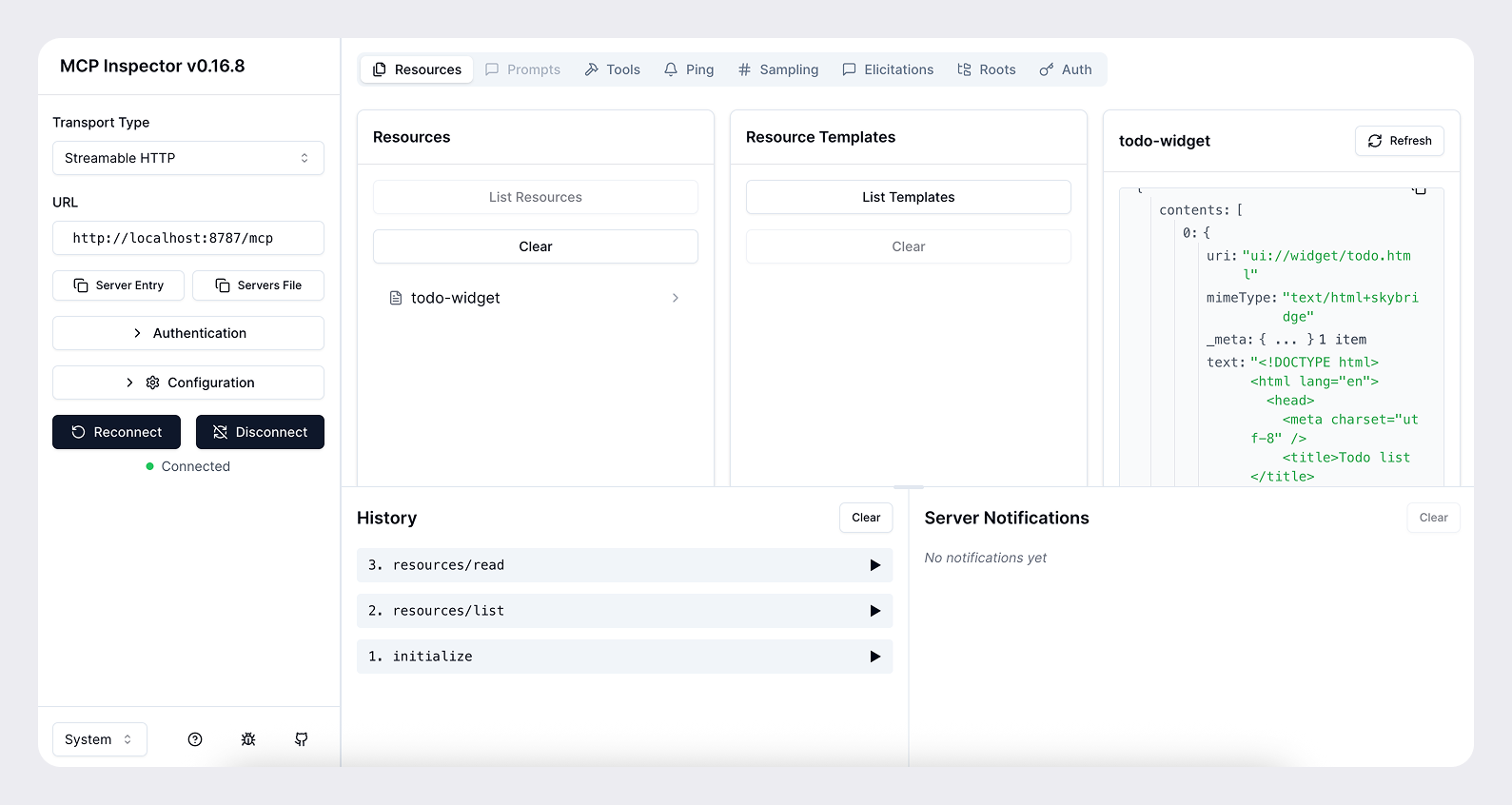

- There are also [built-in tools](https://developers.openai.com/api/docs/guides/tools) that are part of the OpenAI platform. These tools enable the model to [search the web](https://developers.openai.com/api/docs/guides/tools-web-search), [execute code](https://developers.openai.com/api/docs/guides/tools-code-interpreter), access the functionality of an [MCP server](https://developers.openai.com/api/docs/guides/tools-remote-mcp), and more.

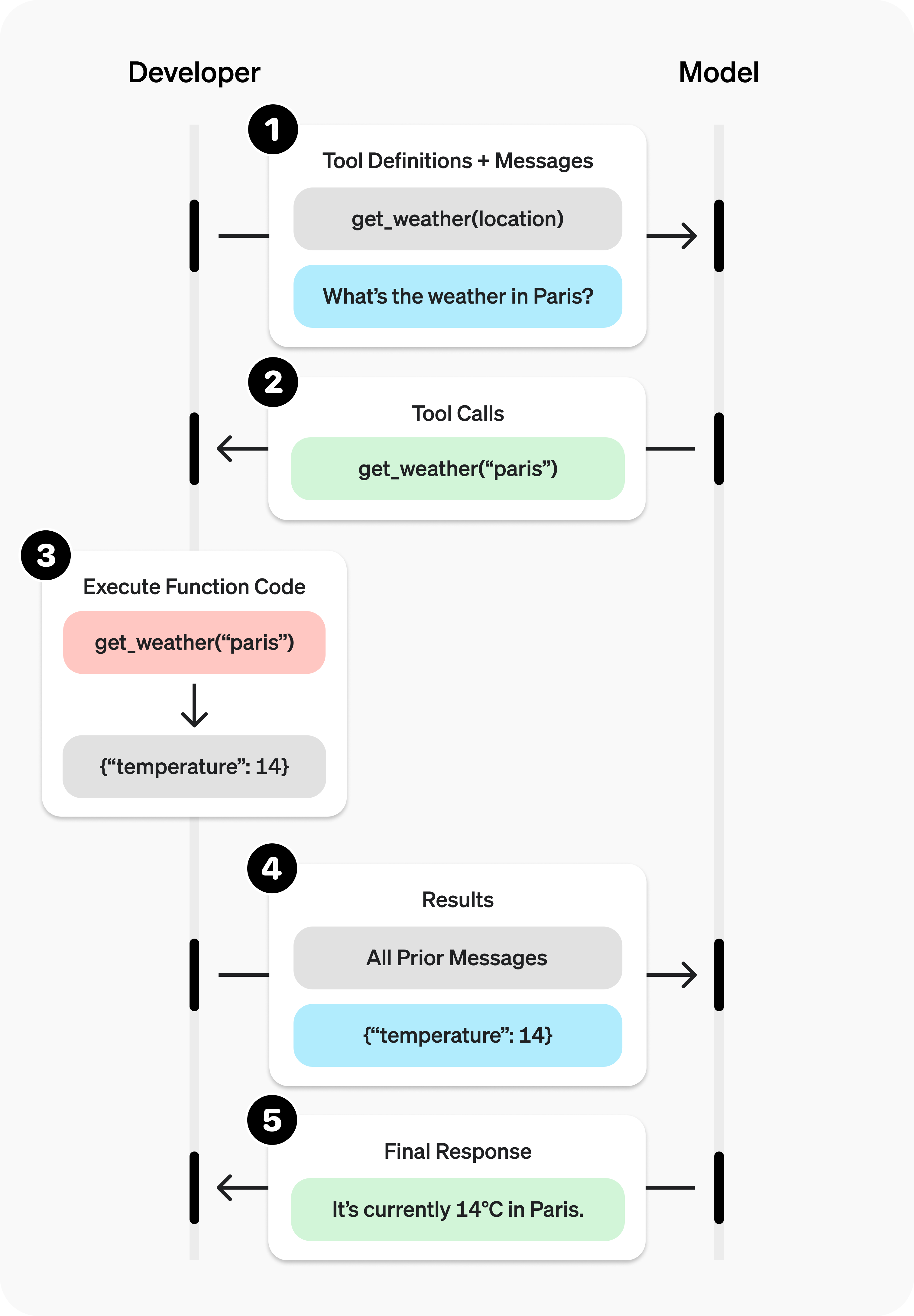

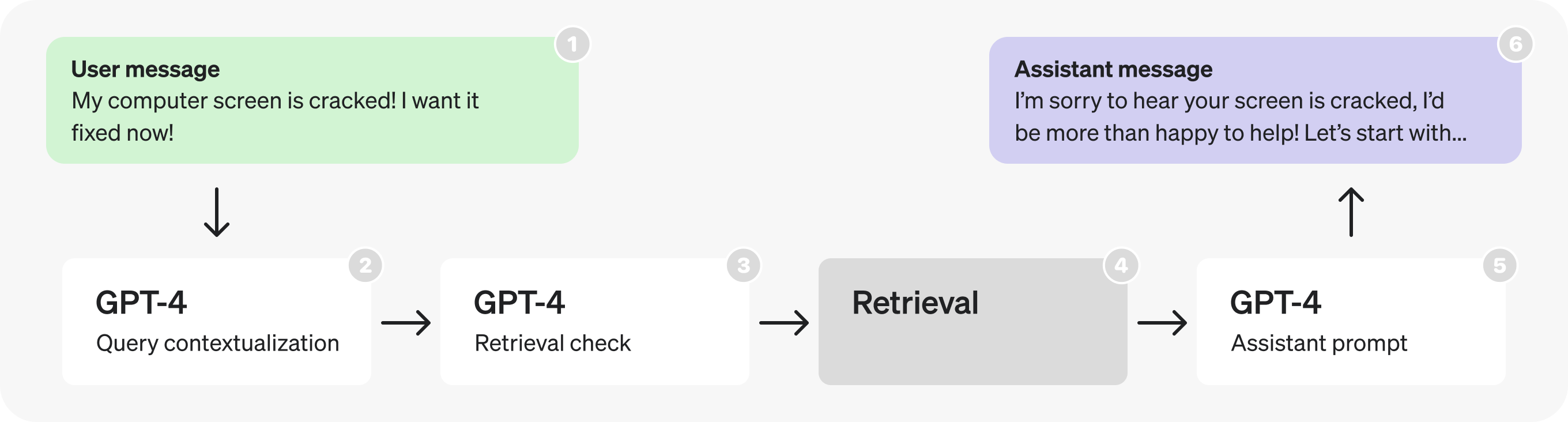

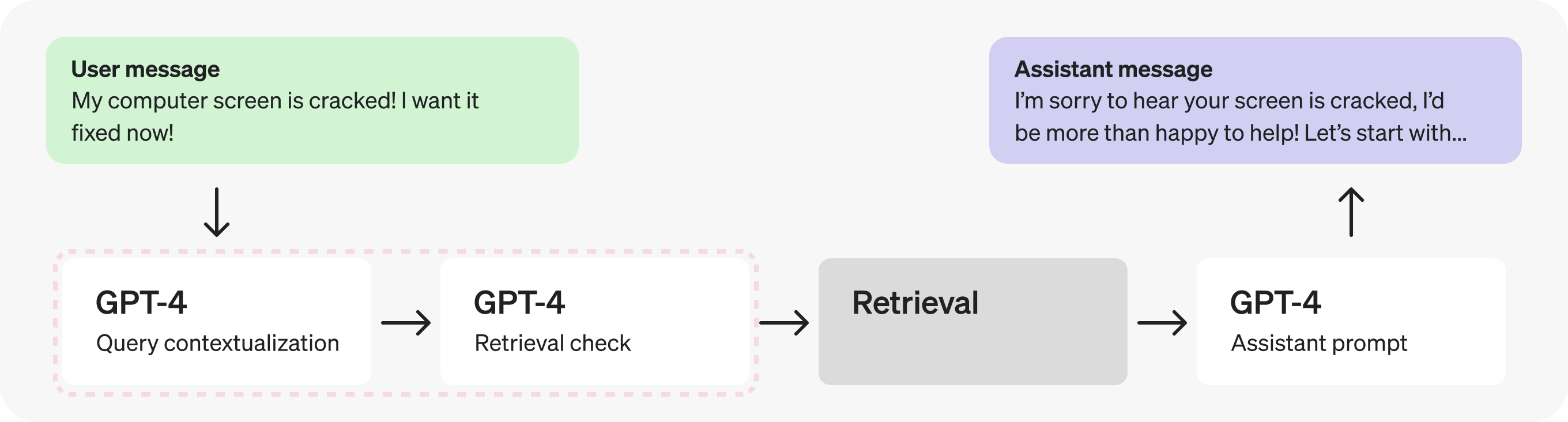

### The tool calling flow

Tool calling is a multi-step conversation between your application and a model via the OpenAI API. The tool calling flow has five high-level steps:

1. Make a request to the model with tools it could call

1. Receive a tool call from the model

1. Execute code on the application side with input from the tool call

1. Make a second request to the model with the tool output

1. Receive a final response from the model (or more tool calls)

## Function tool example

Let's look at an end-to-end tool calling flow for a `get_horoscope` function that gets a daily horoscope for an astrological sign.

Note that for reasoning models like GPT-5 or o4-mini, any reasoning items returned in model responses with tool calls must also be passed back with tool call outputs.
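The flow can be sketched offline as follows. This is an illustration under stated assumptions rather than the official sample: the network requests are omitted, the model's first response is simulated with made-up IDs, and the `sign` parameter and horoscope text are hypothetical. It shows how the input for the second request is assembled, including the reasoning item that must be passed back.

```python
import json

# Step 1: the tool definition that would be sent with the first request.
tools = [{
    "type": "function",
    "name": "get_horoscope",
    "description": "Get today's horoscope for an astrological sign.",
    "parameters": {
        "type": "object",
        "properties": {
            "sign": {"type": "string", "description": "An astrological sign such as Aquarius"},
        },
        "required": ["sign"],
        "additionalProperties": False,
    },
    "strict": True,
}]


def get_horoscope(sign):
    # Placeholder implementation; a real application would look this up.
    return f"{sign}: a calm day with one pleasant surprise."


def build_followup_input(input_items, model_output):
    """Steps 2-4: keep the model's items, run each call, and append its output."""
    followup = list(input_items)
    # Pass back everything the model returned, including reasoning items.
    followup.extend(model_output)
    for item in model_output:
        if item["type"] == "function_call" and item["name"] == "get_horoscope":
            args = json.loads(item["arguments"])
            followup.append({
                "type": "function_call_output",
                "call_id": item["call_id"],
                "output": json.dumps({"horoscope": get_horoscope(args["sign"])}),
            })
    return followup


# Simulated output of the first request (IDs are made up for illustration).
simulated_output = [
    {"type": "reasoning", "id": "rs_example", "summary": []},
    {"type": "function_call", "call_id": "call_example", "name": "get_horoscope",
     "arguments": "{\"sign\": \"Aquarius\"}"},
]
input_items = [{"role": "user", "content": "What is my horoscope? I am an Aquarius."}]

second_request_input = build_followup_input(input_items, simulated_output)
print(json.dumps(second_request_input[-1], indent=2))
```

In a real application, `second_request_input` would be sent back to the model together with the same `tools` list to obtain the final text response (step 5).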

## Defining functions

Functions are usually declared in the `tools` parameter of each API request. With [tool search](https://developers.openai.com/api/docs/guides/tools-tool-search), your application can also load deferred functions later in the interaction. Either way, each callable function uses the same schema shape. A function definition has the following properties:

| Field         | Description                                                                     |
| ------------- | ------------------------------------------------------------------------------- |
| `type`        | This should always be `function`                                                |
| `name`        | The function's name (e.g. `get_weather`)                                        |
| `description` | Details on when and how to use the function                                     |
| `parameters`  | [JSON schema](https://json-schema.org/) defining the function's input arguments |
| `strict`      | Whether to enforce strict mode for the function call                            |

Here is an example function definition for a `get_weather` function:

```json
{
  "type": "function",
  "name": "get_weather",
  "description": "Retrieves current weather for the given location.",
  "parameters": {
    "type": "object",
    "properties": {
      "location": {
        "type": "string",
        "description": "City and country e.g. Bogotá, Colombia"
      },
      "units": {
        "type": "string",
        "enum": ["celsius", "fahrenheit"],
        "description": "Units the temperature will be returned in."
      }
    },
    "required": ["location", "units"],
    "additionalProperties": false
  },
  "strict": true
}
```

Because the `parameters` are defined by a [JSON schema](https://json-schema.org/), you can leverage many of its rich features like property types, enums, descriptions, nested objects, and recursive objects.

## Defining namespaces

Use namespaces to group related tools by domain, such as `crm`, `billing`, or `shipping`. Namespaces help organize similar tools and are especially useful when the model must choose between tools that serve different systems or purposes, such as one search tool for your CRM and another for your support ticketing system.

```json
{
  "type": "namespace",
  "name": "crm",
  "description": "CRM tools for customer lookup and order management.",
  "tools": [
    {
      "type": "function",
      "name": "get_customer_profile",
      "description": "Fetch a customer profile by customer ID.",
      "parameters": {
        "type": "object",
        "properties": {
          "customer_id": { "type": "string" }
        },
        "required": ["customer_id"],
        "additionalProperties": false
      }
    },
    {
      "type": "function",
      "name": "list_open_orders",
      "description": "List open orders for a customer ID.",
      "defer_loading": true,
      "parameters": {
        "type": "object",
        "properties": {
          "customer_id": { "type": "string" }
        },
        "required": ["customer_id"],
        "additionalProperties": false
      }
    }
  ]
}
```

## Tool search

If you need to give the model access to a large ecosystem of tools, you can defer loading some or all of those tools with `tool_search`. The `tool_search` tool lets the model search for relevant tools, add them to the model context, and then use them. Only `gpt-5.4` and later models support it. Read the [tool search guide](https://developers.openai.com/api/docs/guides/tools-tool-search) to learn more.

### Best practices for defining functions

1. **Write clear and detailed function names, parameter descriptions, and instructions.**

- **Explicitly describe the purpose of the function and each parameter** (and its format), and what the output represents.

- **Use the system prompt to describe when (and when not) to use each function.** Generally, tell the model _exactly_ what to do.

- **Include examples and edge cases**, especially to rectify any recurring failures. (**Note:** Adding examples may hurt performance for [reasoning models](https://developers.openai.com/api/docs/guides/reasoning).)

- **For deferred tools, put detailed guidance in the function description and keep the namespace description concise.** The namespace helps the model choose what to load; the function description helps it use the loaded tool correctly.

1. **Apply software engineering best practices.**

- **Make the functions obvious and intuitive**. ([principle of least surprise](https://en.wikipedia.org/wiki/Principle_of_least_astonishment))

- **Use enums** and object structure to make invalid states unrepresentable. (e.g. `toggle_light(on: bool, off: bool)` allows for invalid calls)

- **Pass the intern test.** Can an intern/human correctly use the function given nothing but what you gave the model? (If not, what questions do they ask you? Add the answers to the prompt.)

1. **Offload the burden from the model and use code where possible.**

- **Don't make the model fill arguments you already know.** For example, if you already have an `order_id` based on a previous menu, don't have an `order_id` param – instead, have no params `submit_refund()` and pass the `order_id` with code.

- **Combine functions that are always called in sequence.** For example, if you always call `mark_location()` after `query_location()`, just move the marking logic into the query function call.

1. **Keep the number of initially available functions small for higher accuracy.**

- **Evaluate your performance** with different numbers of functions.

- **Aim for fewer than 20 functions available at the start of a turn** at any one time, though this is just a soft suggestion.

- **Use tool search** to defer large or infrequently used parts of your tool surface instead of exposing everything up front.

1. **Leverage OpenAI resources.**

- **Generate and iterate on function schemas** in the [Playground](https://platform.openai.com/playground).

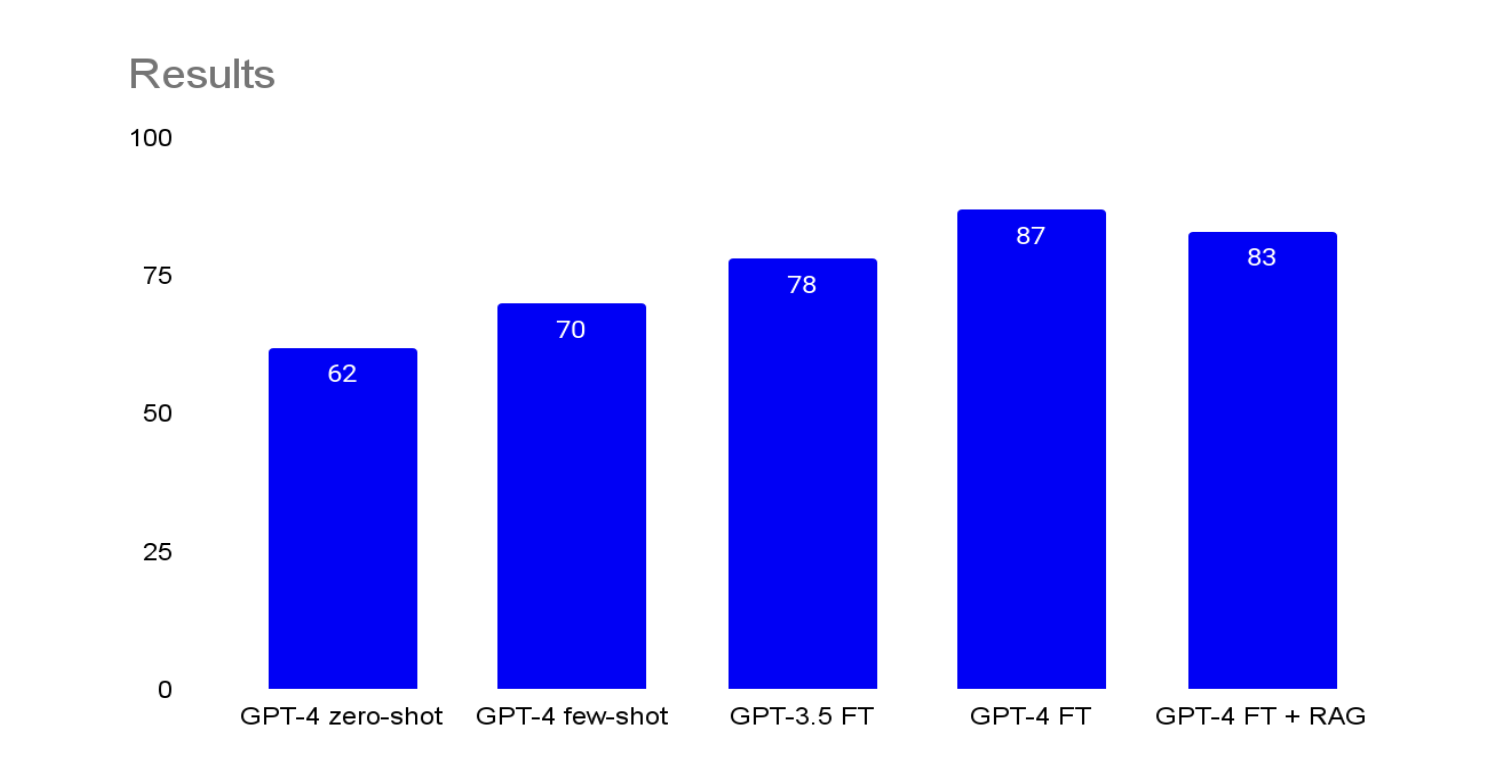

- **Consider [fine-tuning](https://developers.openai.com/api/docs/guides/fine-tuning) to increase function calling accuracy** for large numbers of functions or difficult tasks. ([cookbook](https://developers.openai.com/cookbook/examples/fine_tuning_for_function_calling))

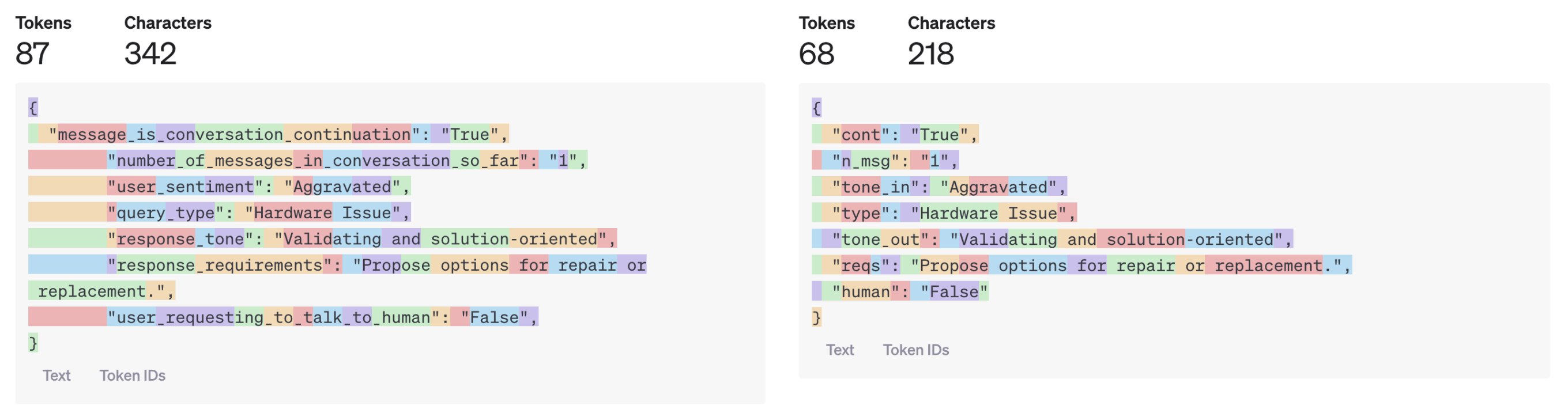

### Token usage

Under the hood, functions are injected into the system message in a syntax the model has been trained on. This means callable function definitions count against the model's context limit and are billed as input tokens. If you run into token limits, we suggest limiting the number of functions loaded up front, shortening descriptions where possible, or using [tool search](https://developers.openai.com/api/docs/guides/tools-tool-search) so deferred tools are loaded only when needed.

It is also possible to use [fine-tuning](https://developers.openai.com/api/docs/guides/fine-tuning#fine-tuning-examples) to reduce the number of tokens used if you have many functions defined in your tools specification.

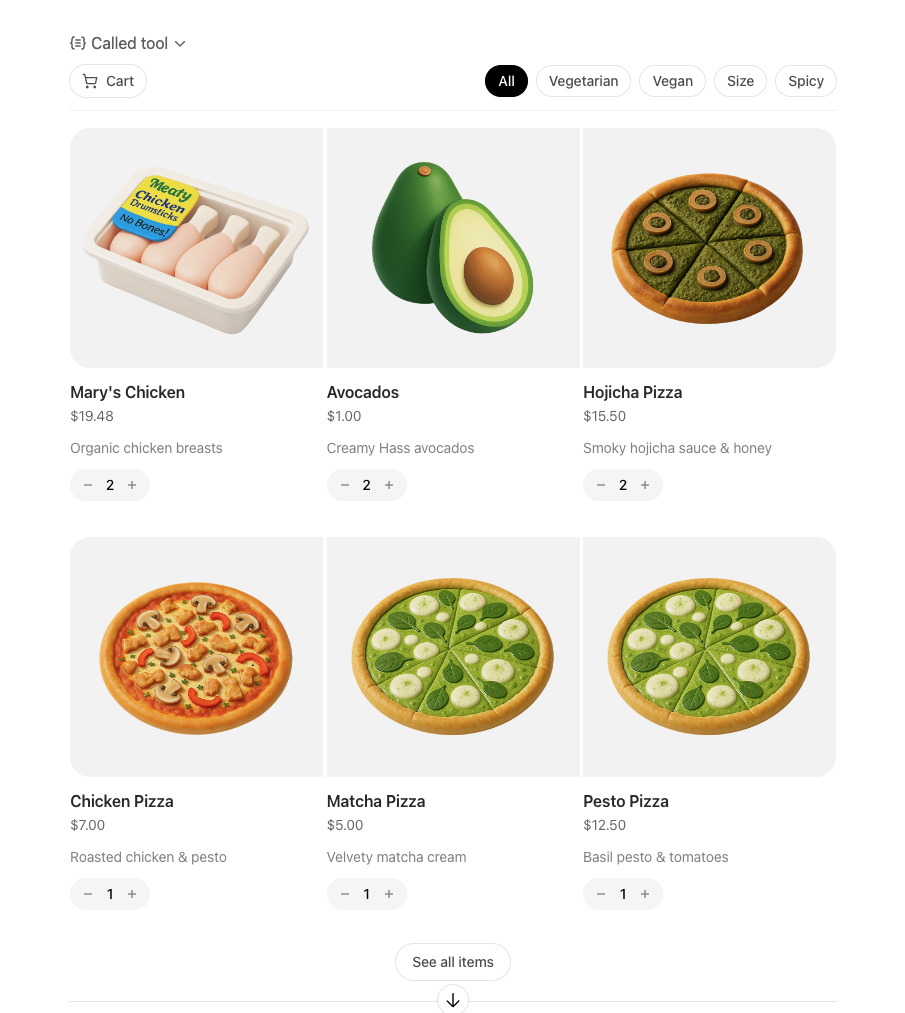

## Handling function calls

When the model calls a function, you must execute it and return the result. Since model responses can include zero, one, or multiple calls, it is best practice to assume there are several.

The response `output` array contains an entry with a `type` of `function_call` for each call the model makes. Each entry has a `call_id` (used later to submit the function result), a `name`, and JSON-encoded `arguments`.

If you are using [tool search](https://developers.openai.com/api/docs/guides/tools-tool-search), you may also see `tool_search_call` and `tool_search_output` items before a `function_call`. Once the function is loaded, handle the function call in the same way shown here.

In the example above, we have a hypothetical `call_function` to route each call. Here’s a possible implementation:
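A minimal sketch of such a router, assuming two placeholder functions (`get_weather` and `send_email`) whose bodies are hypothetical. It looks the function up by name, decodes the JSON `arguments`, and always returns a string suitable for a `function_call_output`.

```python
import json


def get_weather(location, units="celsius"):
    # Placeholder: a real implementation would query a weather service.
    return {"location": location, "temperature": 25, "units": units}


def send_email(to, body):
    # Placeholder with no meaningful return value.
    return None


# Map tool names (as declared in `tools`) to the Python callables behind them.
FUNCTIONS = {"get_weather": get_weather, "send_email": send_email}


def call_function(name, arguments):
    """Route one function call; `arguments` is the JSON-encoded string from the model."""
    func = FUNCTIONS.get(name)
    if func is None:
        return f"error: unknown function {name!r}"
    try:
        result = func(**json.loads(arguments))
    except (TypeError, ValueError) as exc:
        # Malformed JSON or mismatched arguments: report the problem to the model.
        return f"error: {exc}"
    if result is None:
        # Functions without a return value still need to signal the outcome.
        return "success"
    return result if isinstance(result, str) else json.dumps(result)


print(call_function("get_weather", '{"location": "Paris, France", "units": "celsius"}'))
print(call_function("send_email", '{"to": "a@example.com", "body": "hi"}'))
```

Returning an error string instead of raising lets the model see what went wrong and correct its next call; whether that is the right trade-off depends on your application.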

### Formatting results

The result you pass in the `function_call_output` message should typically be a string, where the format is up to you (JSON, error codes, plain text, etc.). The model will interpret that string as needed.

For functions that return images or files, you can pass an [array of image or file objects](https://developers.openai.com/api/docs/api-reference/responses/create#responses_create-input-input_item_list-item-function_tool_call_output-output) instead of a string.

If your function has no return value (e.g. `send_email`), simply return a string that indicates success or failure. (e.g. `"success"`)

### Incorporating results into response

After appending the results to your `input`, you can send them back to the model to get a final response.

## Additional configurations

### Tool choice

By default the model will determine when and how many tools to use. You can force specific behavior with the `tool_choice` parameter.

1. **Auto:** (_Default_) Call zero, one, or multiple functions. `tool_choice: "auto"`

1. **Required:** Call one or more functions. `tool_choice: "required"`

1. **Forced Function:** Call exactly one specific function. `tool_choice: {"type": "function", "name": "get_weather"}`

1. **Allowed tools:** Restrict the tool calls the model can make to a subset of the tools available to the model.

**When to use allowed_tools**

You might want to configure an `allowed_tools` list when you want to make only a subset of tools available across model requests without modifying the list of tools you pass in, so you can maximize savings from [prompt caching](https://developers.openai.com/api/docs/guides/prompt-caching).

```json
"tool_choice": {
  "type": "allowed_tools",
  "mode": "auto",
  "tools": [
    { "type": "function", "name": "get_weather" },
    { "type": "function", "name": "search_docs" }
  ]
}
```

You can also set `tool_choice` to `"none"` to imitate the behavior of passing no functions.

When you use tool search, `tool_choice` still applies to the tools that are currently callable in the turn. This is most useful after you load a subset of tools and want to constrain the model to that subset.

### Parallel function calling

Parallel function calling is not possible when using [built-in tools](https://developers.openai.com/api/docs/guides/tools).

The model may choose to call multiple functions in a single turn. You can prevent this by setting `parallel_tool_calls` to `false`, which ensures exactly zero or one tool is called.

**Note:** Currently, if you are using a fine-tuned model and the model calls multiple functions in one turn, then [strict mode](#strict-mode) will be disabled for those calls.

**Note for `gpt-4.1-nano-2025-04-14`:** This snapshot of `gpt-4.1-nano` can sometimes include multiple tool calls for the same tool if parallel tool calls are enabled. It is recommended to disable this feature when using this nano snapshot.

### Strict mode

Setting `strict` to `true` will ensure function calls reliably adhere to the function schema, instead of being best effort. We recommend always enabling strict mode.

Under the hood, strict mode works by leveraging our [structured outputs](https://developers.openai.com/api/docs/guides/structured-outputs) feature and therefore introduces a couple of requirements:

1. `additionalProperties` must be set to `false` for each object in the `parameters`.

1. All fields in `properties` must be marked as `required`.

You can denote optional fields by adding `null` as a `type` option (see example below).
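As an illustration, the sketch below shows an optional `units` field expressed with a `null` type, plus a small local checker for the two requirements above. The checker `strict_mode_problems` is a hypothetical helper, not part of any SDK, and it covers only these two rules (it does not follow `anyOf` or `$ref`).

```python
def strict_mode_problems(schema, path="parameters"):
    """Return reasons why `schema` would not satisfy the two strict-mode rules."""
    problems = []
    types = schema.get("type")
    types = types if isinstance(types, list) else [types]
    if "object" in types:
        properties = schema.get("properties", {})
        if schema.get("additionalProperties") is not False:
            problems.append(f"{path}: additionalProperties must be false")
        missing = sorted(set(properties) - set(schema.get("required", [])))
        if missing:
            problems.append(f"{path}: not marked required: {missing}")
        # Nested objects must satisfy the same rules.
        for name, subschema in properties.items():
            problems.extend(strict_mode_problems(subschema, f"{path}.{name}"))
    if "array" in types and isinstance(schema.get("items"), dict):
        problems.extend(strict_mode_problems(schema["items"], f"{path}[]"))
    return problems


parameters = {
    "type": "object",
    "properties": {
        "location": {"type": "string"},
        # Optional field: still listed in `required`, but allowed to be null.
        "units": {"type": ["string", "null"]},
    },
    "required": ["location", "units"],
    "additionalProperties": False,
}

print(strict_mode_problems(parameters))  # prints []
```

Running a check like this before sending a request can surface schema problems earlier than a rejected API call, although the API's own validation remains the source of truth.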

If you send `strict: true` and your schema does not meet the requirements above, the request will be rejected with details about the missing constraints. If you omit `strict`, the default depends on the API: Responses requests will normalize your schema into strict mode (for example, by setting `additionalProperties: false` and marking all fields as required), which can make previously optional fields mandatory, while Chat Completions requests remain non-strict by default. To opt out of strict mode in Responses and keep non-strict, best-effort function calling, explicitly set `strict: false`.

All schemas generated in the [playground](https://platform.openai.com/playground) have strict mode enabled.

While we recommend you enable strict mode, it has a few limitations:

1. Some features of JSON schema are not supported. (See [supported schemas](https://developers.openai.com/api/docs/guides/structured-outputs?context=with_parse#supported-schemas).)

Specifically for fine-tuned models:

1. Schemas undergo additional processing on the first request (and are then cached). If your schemas vary from request to request, this may result in higher latencies.

2. Schemas are cached for performance, and are not eligible for [zero data retention](https://developers.openai.com/api/docs/models#how-we-use-your-data).

## Streaming

Streaming can be used to surface progress by showing which function is called as the model fills its arguments, and even displaying the arguments in real time.

Streaming function calls is very similar to streaming regular responses: you set `stream` to `true` and get different `event` objects.

Instead of aggregating chunks into a single `content` string, however, you're aggregating chunks into an encoded `arguments` JSON object.

When the model calls one or more functions an event of type `response.output_item.added` will be emitted for each function call that contains the following fields:

| Field          | Description                                                                                                  |
| -------------- | ------------------------------------------------------------------------------------------------------------ |
| `response_id`  | The id of the response that the function call belongs to                                                     |
| `output_index` | The index of the output item in the response. This represents the individual function calls in the response. |
| `item`         | The in-progress function call item that includes a `name`, `arguments` and `id` field                        |

Afterwards you will receive a series of events of type `response.function_call_arguments.delta` which will contain the `delta` of the `arguments` field. These events contain the following fields:

| Field          | Description                                                                                                  |
| -------------- | ------------------------------------------------------------------------------------------------------------ |
| `response_id`  | The id of the response that the function call belongs to                                                     |
| `item_id`      | The id of the function call item that the delta belongs to                                                   |
| `output_index` | The index of the output item in the response. This represents the individual function calls in the response. |
| `delta`        | The delta of the `arguments` field.                                                                          |

Below is a code snippet demonstrating how to aggregate the `delta`s into a final `tool_call` object.
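A minimal sketch of that aggregation, assuming each event has already been converted to a plain dictionary with the fields listed in the tables above (SDKs typically expose them as object attributes instead). The stream is simulated with made-up IDs so the example runs without a network call.

```python
import json


def aggregate_tool_calls(events):
    """Collect streamed function calls, keyed by their `output_index`."""
    tool_calls = {}
    for event in events:
        if event["type"] == "response.output_item.added":
            item = event["item"]
            if item.get("type") == "function_call":
                # Copy the in-progress item so the original event is not mutated.
                tool_calls[event["output_index"]] = dict(item)
        elif event["type"] == "response.function_call_arguments.delta":
            call = tool_calls.get(event["output_index"])
            if call is not None:
                # Append each chunk to the JSON-encoded arguments string.
                call["arguments"] = call.get("arguments", "") + event["delta"]
    return tool_calls


# Simulated stream for a single get_weather call (IDs are made up).
events = [
    {"type": "response.output_item.added", "response_id": "resp_1", "output_index": 0,
     "item": {"type": "function_call", "id": "fc_1", "name": "get_weather", "arguments": ""}},
    {"type": "response.function_call_arguments.delta", "response_id": "resp_1",
     "item_id": "fc_1", "output_index": 0, "delta": "{\"location\":"},
    {"type": "response.function_call_arguments.delta", "response_id": "resp_1",
     "item_id": "fc_1", "output_index": 0, "delta": "\"Paris, France\"}"},
]

final_tool_calls = aggregate_tool_calls(events)
print(json.loads(final_tool_calls[0]["arguments"]))
```

The `arguments` string is only valid JSON once all deltas have arrived, so parse it after the stream for that call has finished rather than after each chunk.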

When the model has finished calling the functions an event of type `response.function_call_arguments.done` will be emitted. This event contains the entire function call including the following fields:

| Field          | Description                                                                                                  |
| -------------- | ------------------------------------------------------------------------------------------------------------ |
| `response_id`  | The id of the response that the function call belongs to                                                     |
| `output_index` | The index of the output item in the response. This represents the individual function calls in the response. |
| `item`         | The function call item that includes a `name`, `arguments` and `id` field.                                   |

## Custom tools

Custom tools work in much the same way as JSON schema-driven function tools. But rather than providing the model explicit instructions on what input your tool requires, the model can pass an arbitrary string back to your tool as input. This is useful to avoid unnecessarily wrapping a response in JSON, or to apply a custom grammar to the response (more on this below).

The following code sample shows creating a custom tool that expects to receive a string of text containing Python code as a response.
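A minimal sketch of such a tool definition, and of packaging its result, might look like this. The `"type": "custom"` tool shape and the `custom_tool_call_output` item type are assumptions to confirm against the API reference, and `run` is a hypothetical callback that receives the raw text the model produced.

```python
# Assumed shape: a custom tool has a name and description but no JSON schema.
code_exec_tool = {
    "type": "custom",
    "name": "code_exec",
    "description": "Executes arbitrary Python code.",
}


def handle_custom_tool_call(item, run):
    """Build the output item for one `custom_tool_call`.

    `run` is a hypothetical callback that receives the plain-text input.
    """
    return {
        "type": "custom_tool_call_output",  # assumed item type for returning results
        "call_id": item["call_id"],
        "output": str(run(item["input"])),
    }


# Simulated tool call; the stand-in runner only measures the input and
# deliberately does not execute model-generated code.
tool_call = {
    "type": "custom_tool_call",
    "call_id": "call_example",
    "name": "code_exec",
    "input": "print(\"hello world\")",
}
output_item = handle_custom_tool_call(tool_call, lambda source: f"received {len(source)} characters")
print(output_item)
```

If you do execute model-generated code, run it in a sandbox; the stand-in runner above avoids execution entirely.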

Just as before, the `output` array will contain a tool call generated by the model. Except this time, the tool call input is given as plain text.

```json
[
  {
    "id": "rs_6890e972fa7c819ca8bc561526b989170694874912ae0ea6",
    "type": "reasoning",
    "content": [],
    "summary": []
  },
  {
    "id": "ctc_6890e975e86c819c9338825b3e1994810694874912ae0ea6",
    "type": "custom_tool_call",
    "status": "completed",
    "call_id": "call_aGiFQkRWSWAIsMQ19fKqxUgb",
    "input": "print(\"hello world\")",
    "name": "code_exec"
  }
]
```

### Context-free grammars

A [context-free grammar](https://en.wikipedia.org/wiki/Context-free_grammar) (CFG) is a set of rules that define how to produce valid text in a given format. For custom tools, you can provide a CFG that will constrain the model's text input for a custom tool.

You can provide a custom CFG using the `grammar` parameter when configuring a custom tool. Currently, we support two CFG syntaxes when defining grammars: `lark` and `regex`.

#### Lark CFG
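As a sketch of what a Lark-constrained custom tool could look like, the snippet below defines a small arithmetic grammar and attaches it to a `math_exp` tool. The `format` wrapper and its `type`, `syntax`, and `definition` keys are assumptions to verify against the API reference, and the grammar itself is illustrative. The regular expression is only a local sanity check that describes the same language as the grammar.

```python
import re

# Terminals are UPPERCASE, rules are lowercase; whitespace is an explicit terminal.
grammar = """
start: expr
expr: term (SP ADD SP term)*
term: INT (SP MUL SP INT)*
SP: " "
ADD: "+"
MUL: "*"
INT: /[0-9]+/
"""

math_exp_tool = {
    "type": "custom",
    "name": "math_exp",
    "description": "Creates valid mathematical expressions.",
    # Assumed parameter shape for attaching a grammar to a custom tool.
    "format": {"type": "grammar", "syntax": "lark", "definition": grammar},
}

# Local sanity check only: integers separated by " + " or " * ".
EQUIVALENT_REGEX = re.compile(r"[0-9]+( [+*] [0-9]+)*")


def looks_valid(expression):
    return EQUIVALENT_REGEX.fullmatch(expression) is not None


print(looks_valid("4 + 4"), looks_valid("4+4"))
```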

The output from the tool should then conform to the Lark CFG that you defined:

```json
[
  {
    "id": "rs_6890ed2b6374819dbbff5353e6664ef103f4db9848be4829",
    "type": "reasoning",
    "content": [],
    "summary": []
  },
  {
    "id": "ctc_6890ed2f32e8819daa62bef772b8c15503f4db9848be4829",
    "type": "custom_tool_call",
    "status": "completed",
    "call_id": "call_pmlLjmvG33KJdyVdC4MVdk5N",
    "input": "4 + 4",
    "name": "math_exp"
  }
]
```

Grammars are specified using a variation of [Lark](https://lark-parser.readthedocs.io/en/stable/index.html). Model sampling is constrained using [LLGuidance](https://github.com/guidance-ai/llguidance/blob/main/docs/syntax.md). Some features of Lark are not supported:

- Lookarounds in lexer regexes

- Lazy modifiers (`*?`, `+?`, `??`) in lexer regexes

- Priorities of terminals

- Templates

- Imports (other than the built-in `%import common`)

- `%declare`s

We recommend using the [Lark IDE](https://www.lark-parser.org/ide/) to experiment with custom grammars.

### Keep grammars simple

Try to make your grammar as simple as possible. The OpenAI API may return an error if the grammar is too complex, so you should ensure that your desired grammar is compatible before using it in the API.

Lark grammars can be tricky to perfect. While simple grammars perform most reliably, complex grammars often require iteration on the grammar definition itself, the prompt, and the tool description to ensure that the model does not go out of distribution.

### Correct versus incorrect patterns

Correct (single, bounded terminal):

```

start: SENTENCE

SENTENCE: /[A-Za-z, ]*(the hero|a dragon|an old man|the princess)[A-Za-z, ]*(fought|saved|found|lost)[A-Za-z, ]*(a treasure|the kingdom|a secret|his way)[A-Za-z, ]*\./

```

Do NOT do this (splitting across rules/terminals). This attempts to let rules partition free text between terminals. The lexer will greedily match the free-text pieces and you'll lose control:

```

start: sentence

sentence: /[A-Za-z, ]+/ subject /[A-Za-z, ]+/ verb /[A-Za-z, ]+/ object /[A-Za-z, ]+/

```

Lowercase rules don't influence how terminals are cut from the input—only terminal definitions do. When you need “free text between anchors,” make it one giant regex terminal so the lexer matches it exactly once with the structure you intend.

### Terminals versus rules

Lark uses terminals for lexer tokens (by convention, `UPPERCASE`) and rules for parser productions (by convention, `lowercase`). The most practical way to stay within the supported subset and avoid surprises is to keep your grammar simple and explicit, and to use terminals and rules with a clear separation of concerns.

The regex syntax used by terminals is the [Rust regex crate syntax](https://docs.rs/regex/latest/regex/#syntax), not Python's `re` [module](https://docs.python.org/3/library/re.html).

### Key ideas and best practices

**Lexer runs before the parser**

Terminals are matched by the lexer (greedily / longest match wins) before any CFG rule logic is applied. If you try to "shape" a terminal by splitting it across several rules, the lexer cannot be guided by those rules—only by terminal regexes.

**Prefer one terminal when you're carving text out of freeform spans**

If you need to recognize a pattern embedded in arbitrary text (e.g., natural language with “anything” between anchors), express that as a single terminal. Do not try to interleave free‑text terminals with parser rules; the greedy lexer will not respect your intended boundaries and it is highly likely the model will go out of distribution.

**Use rules to compose discrete tokens**

Rules are ideal when you're combining clearly delimited terminals (numbers, keywords, punctuation) into larger structures. They're not the right tool for constraining "the stuff in between" two terminals.

**Keep terminals simple, bounded, and self-contained**

Favor explicit character classes and bounded quantifiers (`{0,10}`, not unbounded `*` everywhere). If you need "any text up to a period", prefer something like `/[^.\n]{0,10}\./` rather than `/.+\./` to avoid runaway growth.

**Use rules to combine tokens, not to steer regex internals**

Good rule usage example:

```
start: expr

NUMBER: /[0-9]+/
PLUS: "+"
MINUS: "-"

expr: term ((PLUS | MINUS) term)*
term: NUMBER
```

**Treat whitespace explicitly**

Don't rely on open-ended `%ignore` directives. Using unbounded ignore directives may cause the grammar to be too complex and/or may cause the model to go out of distribution. Prefer threading explicit terminals wherever whitespace is allowed.

### Troubleshooting

- If the API rejects the grammar because it is too complex, simplify the rules and terminals and remove unbounded `%ignore`s.

- If custom tools are called with unexpected tokens, confirm that terminals aren't overlapping and check for greedy lexer matches.

- If the model drifts "out of distribution" (for example, it produces excessively long or repetitive outputs, or output that is syntactically valid but semantically wrong):

  - Tighten the grammar.

  - Iterate on the prompt (add few-shot examples) and tool description (explain the grammar and instruct the model to reason and conform to it).

  - Experiment with a higher reasoning effort (e.g., bump from medium to high).

#### Regex CFG
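A sketch of a regex-constrained custom tool follows. As with the Lark variant, the `format` wrapper and its keys are assumptions to verify against the API reference, and the pattern is illustrative. It sticks to constructs that Python's `re` module and the Rust regex crate both accept (named groups, `\d`, `\s`, non-capturing groups), so Python is used here only for a local check.

```python
import re

# One-line pattern passed as a plain string, without surrounding slashes.
timestamp_pattern = (
    r"^(?P<month>January|February|March|April|May|June|July|August|September|"
    r"October|November|December)\s+(?P<day>\d{1,2})(?:st|nd|rd|th)?\s+"
    r"(?P<year>\d{4})\s+at\s+(?P<hour>0?[1-9]|1[0-2])(?P<ampm>AM|PM)$"
)

timestamp_tool = {
    "type": "custom",
    "name": "timestamp",
    "description": "Saves a timestamp in a fixed textual format.",
    # Assumed parameter shape for attaching a regex grammar to a custom tool.
    "format": {"type": "grammar", "syntax": "regex", "definition": timestamp_pattern},
}

# Local check with Python's `re`; the API applies the Rust regex crate instead.
match = re.match(timestamp_pattern, "August 7th 2025 at 10AM")
print(match.groupdict() if match else "no match")
```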

The output from the tool should then conform to the Regex CFG that you defined:

```json
[
  {
    "id": "rs_6894f7a3dd4c81a1823a723a00bfa8710d7962f622d1c260",
    "type": "reasoning",
    "content": [],
    "summary": []
  },
  {
    "id": "ctc_6894f7ad7fb881a1bffa1f377393b1a40d7962f622d1c260",
    "type": "custom_tool_call",
    "status": "completed",
    "call_id": "call_8m4XCnYvEmFlzHgDHbaOCFlK",
    "input": "August 7th 2025 at 10AM",
    "name": "timestamp"
  }
]
```

As with the Lark syntax, regexes use the [Rust regex crate syntax](https://docs.rs/regex/latest/regex/#syntax), not Python's `re` [module](https://docs.python.org/3/library/re.html).

Some features of Regex are not supported:

- Lookarounds

- Lazy modifiers (`*?`, `+?`, `??`)

### Key ideas and best practices

**Pattern must be on one line**

If you need to match a newline in the input, use the escaped sequence `\n`. Do not use verbose/extended mode, which allows patterns to span multiple lines.

**Provide the regex as a plain pattern string**

Don't enclose the pattern in `//`.

---

# Getting started with datasets

Evaluations (often called **evals**) test model outputs to ensure they meet your specified style and content criteria. Writing evals is an essential part of building reliable applications. [Datasets](https://platform.openai.com/evaluation/datasets), a feature of the OpenAI platform, provide a quick way to get started with evals and test prompts.

If you need advanced features such as evaluation against external models, want to interact with your eval runs via API, or want to run evaluations on a larger scale, consider using [Evals](https://developers.openai.com/api/docs/guides/evals) instead.

## Create a dataset

First, create a dataset in the dashboard.

1. On the [evaluation page](https://platform.openai.com/evaluation), navigate to the **Datasets** tab.

1. Click the **Create** button in the top right to get started.

1. Add a name for your dataset in the input field. In this guide, we'll name our dataset “Investment memo generation.”

1. Add data. To build your dataset from scratch, click **Create** and start adding data through our visual interface. If you already have a saved prompt or a CSV with data, upload it.

We recommend using your dataset as a dynamic space, expanding your set of evaluation data over time. As you identify edge cases or blind spots that need monitoring, add them using the dashboard interface.

### Uploading a CSV

We have a simple CSV containing company names and actual values for their revenue from past quarters.

The columns in your CSV are accessible to both your prompt and graders. For example, our CSV contains input columns (`company`) and ground truth columns (`correct_revenue`, `correct_income`) for our graders to use as reference.

### Using the visual data interface

After opening your dataset, you can manipulate your data in the **Data** tab. Click a cell to edit its contents. Add a row to add more data. You can also delete or duplicate rows in the overflow menu at the right edge of each row.

To save your changes, click the **Save** button in the top right.

## Build a prompt

The tabs in the datasets dashboard let multiple prompts interact with the same data.

1. To add a new prompt, click **Add prompt**.

Datasets are designed to be used with your OpenAI [prompts](https://developers.openai.com/api/docs/guides/prompt-engineering#reusable-prompts). If you’ve saved a prompt on the OpenAI platform, you’ll be able to select it from the dropdown and make changes in this interface. To save your prompt changes, click **Save**.

Our prompts use a versioning system so you can safely make updates. Clicking **Save** creates a new version of your prompt, which you can refer to or use anywhere in the OpenAI platform.

1. In the prompt panel, use the provided fields and settings to control the inference call:

- Click the slider icon in the top right to control model [`temperature`](https://developers.openai.com/api/docs/api-reference/responses/create#responses-create-temperature) and [`top_p`](https://developers.openai.com/api/docs/api-reference/responses/create#responses-create-top_p).

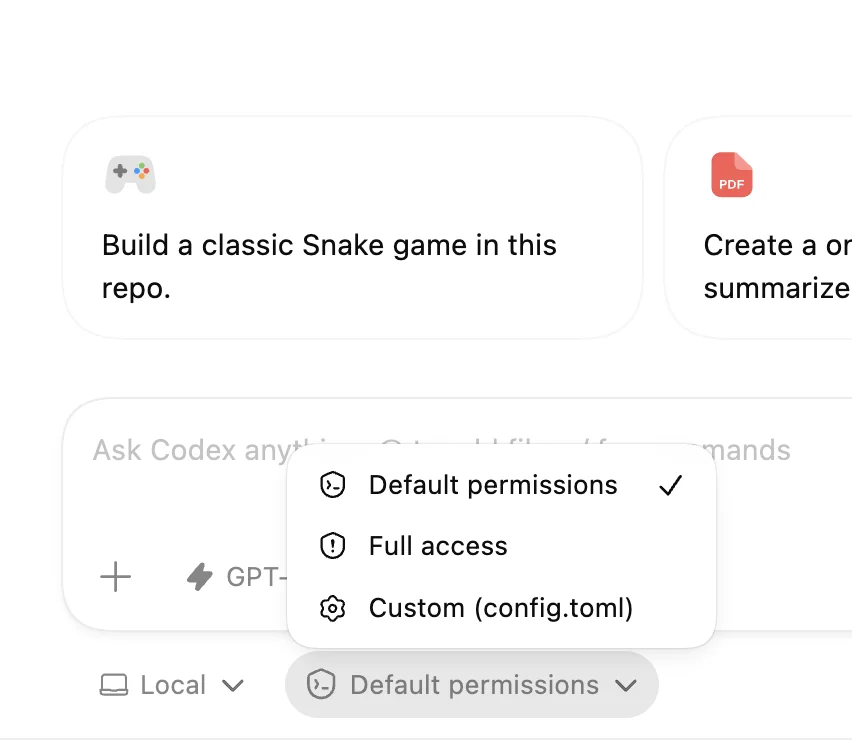

- Add tools to grant your inference call the ability to access the web, use an MCP, or complete other tool-call actions.

- Add variables. The prompt and your [graders](#adding-graders) can both refer to these variables.

- Type your system message directly, or click the pencil icon to have a model help generate a prompt for you, based on basic instructions you provide.

In our example, we'll add the [web search](https://developers.openai.com/api/docs/guides/tools-web-search) tool so our model call can pull financial data from the internet. In our variables list, we'll add `company` so our prompt can reference the company column in our dataset. For the prompt, we'll generate one by telling the model to "generate a financial report."

## Generate and annotate outputs

With your data and prompt set up, you’re ready to generate outputs. The model's output gives you a sense of how the model performs your task with the prompt and tools you provided. You'll then annotate the outputs so the model can improve its performance over time.

1. In the top right, click **Generate output**.

You’ll see a new special **output** column in the dataset begin to populate with results. This column contains the results from running your prompt on each row in your dataset.

1. Once your generated outputs are ready, annotate them. Open the annotation view by clicking the **output**, **rating**, or **output_feedback** column.

Annotate as little or as much as you want. Datasets are designed to work with any degree and type of annotation, but the higher quality of information you can provide, the better your results will be.

### What annotation does

Annotations are a key part of evaluating and improving model output. A good annotation:

- Serves as ground truth for desired model behavior, even for highly specific cases—including subjective elements, like style and tone

- Provides information-dense context enabling automatic prompt improvement (via our prompt optimizer)

- Enables diagnosing prompt shortcomings, particularly in subtle or infrequent cases

- Helps ensure that graders are aligned with your intent

You can choose to annotate as little or as much as you want. Datasets are designed to work with any degree and type of annotation, but the higher quality of information you can provide, the better your results will be. Additionally, if you’re not an expert on the contents of your dataset, we recommend that a subject matter expert performs the annotation — this is the most valuable way for their expertise to be incorporated into your optimization process. Explore [our cookbook](https://developers.openai.com/cookbook/examples/evaluation/building_resilient_prompts_using_an_evaluation_flywheel) to learn more about what we have found to be most effective in using evals to improve our prompt resilience.

### Annotation starting points

Here are a few types of annotations you can use to get started:

- A Good/Bad rating, indicating your judgment of the output

- A text critique in the **output_feedback** section

- Custom annotation categories that you added in the **Columns** dropdown in the top right

### Incorporate expert annotations

If you’re not an expert on the contents of your dataset, have a subject matter expert perform the annotation. This is the best way to incorporate expertise into the optimization process. Explore [our cookbook](https://developers.openai.com/cookbook/examples/evaluation/building_resilient_prompts_using_an_evaluation_flywheel) to learn more.

## Add graders

While annotations are the most effective way to incorporate human feedback into your evaluation process, graders let you run evaluations at scale. Graders are automated assessments that can produce a variety of outputs depending on their type.

| **Type** | **Details** | **Use case** |
| ------------------------- | --------------------------------------------------------------------------------- | -------------------------------------------------------------------------------------------------- |
| **String check** | Compares model output to the reference using exact string matching | Check whether your response exactly matches a ground truth column |
| **Text similarity** | Uses embeddings to compute semantic similarity between model output and reference | Check how close your response is to your ground truth reference, when exact matching is not needed |
| **Score model grader** | Uses an LLM to assign a numeric score | Measure subjective properties such as friendliness on a numeric scale |
| **Label model grader** | Uses an LLM to select a categorical label | Categorize your response based on fixed labels, such as "concise" or "verbose" |
| **Python code execution** | Runs custom Python code to compute a result programmatically | Check whether the output contains fewer than 50 words |
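To make the last row of the table concrete, here is a minimal sketch of a word-count check in Python. The `grade(sample, item)` entry point and the `output_text` key are assumptions about how a Python grader receives the model output; check the graders guide for the exact contract before relying on them.

```python
def grade(sample: dict, item: dict) -> float:
    """Return 1.0 if the model output has fewer than 50 words, else 0.0."""
    # Assumption: the model's text output is available under "output_text".
    output_text = sample.get("output_text", "")
    # Split on whitespace to get an approximate word count.
    word_count = len(output_text.split())
    return 1.0 if word_count < 50 else 0.0
```

The `item` argument is unused here; in a grader that compares against ground truth, it would be the natural place to read a reference column such as `correct_revenue`.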

1. In the top right, navigate to **Grade** > **New grader**.

1. From the dropdown, choose your grader type, and fill out the form to compose your grader.

1. Reference the columns from your dataset to check against ground truth values.

1. Create the grader.

1. Once you’ve added at least one grader, use the **Grade** dropdown menu to run specific graders or all graders on your dataset. When a run is complete, you’ll see pass/fail ratings in your dataset in a dedicated column for each grader.

After saving your dataset, graders persist as you make changes to your dataset and prompt, making them a great way to quickly assess whether a prompt or model parameter change leads to improvements, or whether adding edge cases reveals shortcomings in your prompt. The datasets dashboard supports multiple tabs for simultaneously tracking results from automated graders across multiple variants of a prompt.

Learn more about our [graders](https://developers.openai.com/api/docs/guides/graders).

## Next steps

Datasets are great for rapid iteration. When you're ready to track performance over time or run at scale, export your dataset to an [Eval](https://developers.openai.com/api/docs/guides/evals). Evals run asynchronously, support larger data volumes, and let you monitor performance across versions.

For more inspiration, visit the [OpenAI Cookbook](https://developers.openai.com/cookbook/topic/evals), which contains example code and links to third-party resources, or learn more about our evaluation tools:

- Operate a flywheel of continuous improvement using evaluations.

- Evaluate against external models, interact with evals via API, and more.

- Use your dataset to automatically improve your prompts.

- [Build sophisticated graders to improve the effectiveness of your evals.](https://developers.openai.com/api/docs/guides/graders)

---

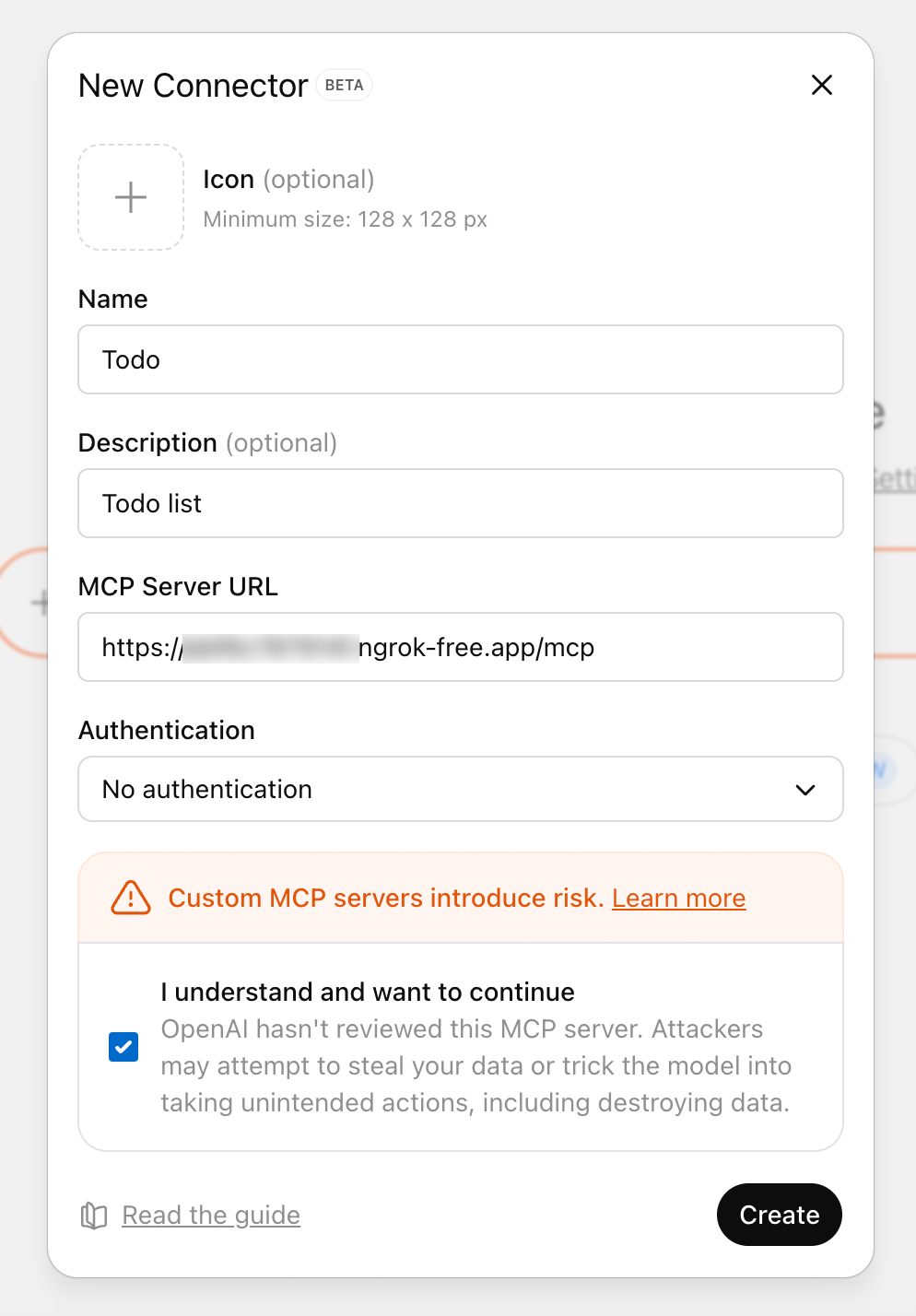

# Getting started with GPT Actions

## Weather.gov example

The NWS (National Weather Service) maintains a [public API](https://www.weather.gov/documentation/services-web-api) that users can query to receive a weather forecast for any lat-long point. Retrieving a forecast takes two steps:

1. A user provides a lat-long to the api.weather.gov/points API and receives back a WFO (weather forecast office), grid-X, and grid-Y coordinates

2. Those 3 elements feed into the api.weather.gov/forecast API to retrieve a forecast for that coordinate
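The two steps above can be sketched in Python with only the standard library. This is an illustrative outline rather than an official client: the `gridId`, `gridX`, and `gridY` field names are assumptions about the points response (they match the names used in the example instructions later in this guide), and the `User-Agent` value is a placeholder you would replace with your own contact details.

```python
import json
from urllib.request import Request, urlopen

BASE_URL = "https://api.weather.gov"


def points_url(latitude: float, longitude: float) -> str:
    """Step 1 URL: look up the forecast office and grid for a lat-long."""
    return f"{BASE_URL}/points/{latitude},{longitude}"


def forecast_url(office: str, grid_x: int, grid_y: int) -> str:
    """Step 2 URL: fetch the forecast for a forecast office and grid cell."""
    return f"{BASE_URL}/gridpoints/{office}/{grid_x},{grid_y}/forecast"


def grid_from_points_response(payload: dict) -> tuple:
    """Pull the office ID and grid coordinates out of a points response."""
    # Assumption: these fields live under the top-level "properties" object.
    props = payload["properties"]
    return props["gridId"], props["gridX"], props["gridY"]


def fetch_json(url: str) -> dict:
    """GET a URL and decode the JSON body."""
    # Placeholder User-Agent; replace with a string identifying your app.
    request = Request(url, headers={"User-Agent": "weather-example (contact@example.com)"})
    with urlopen(request) as response:
        return json.load(response)


def get_forecast(latitude: float, longitude: float) -> dict:
    """Chain both steps: lat-long -> office and grid -> forecast."""
    points = fetch_json(points_url(latitude, longitude))
    office, grid_x, grid_y = grid_from_points_response(points)
    return fetch_json(forecast_url(office, grid_x, grid_y))
```

Calling `get_forecast(38.9072, -77.0369)` would make two network requests in sequence, which is the same chain the Custom GPT performs through its two Actions.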

For the purpose of this exercise, let’s build a Custom GPT where a user writes a city, landmark, or lat-long coordinates, and the Custom GPT answers questions about a weather forecast in that location.

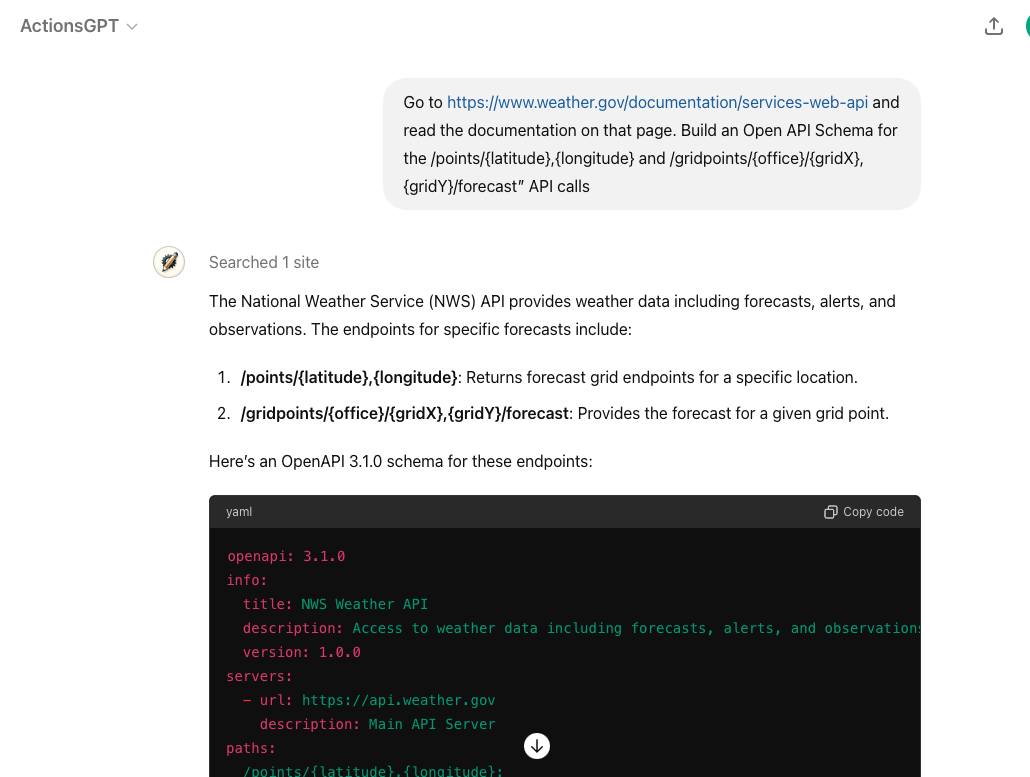

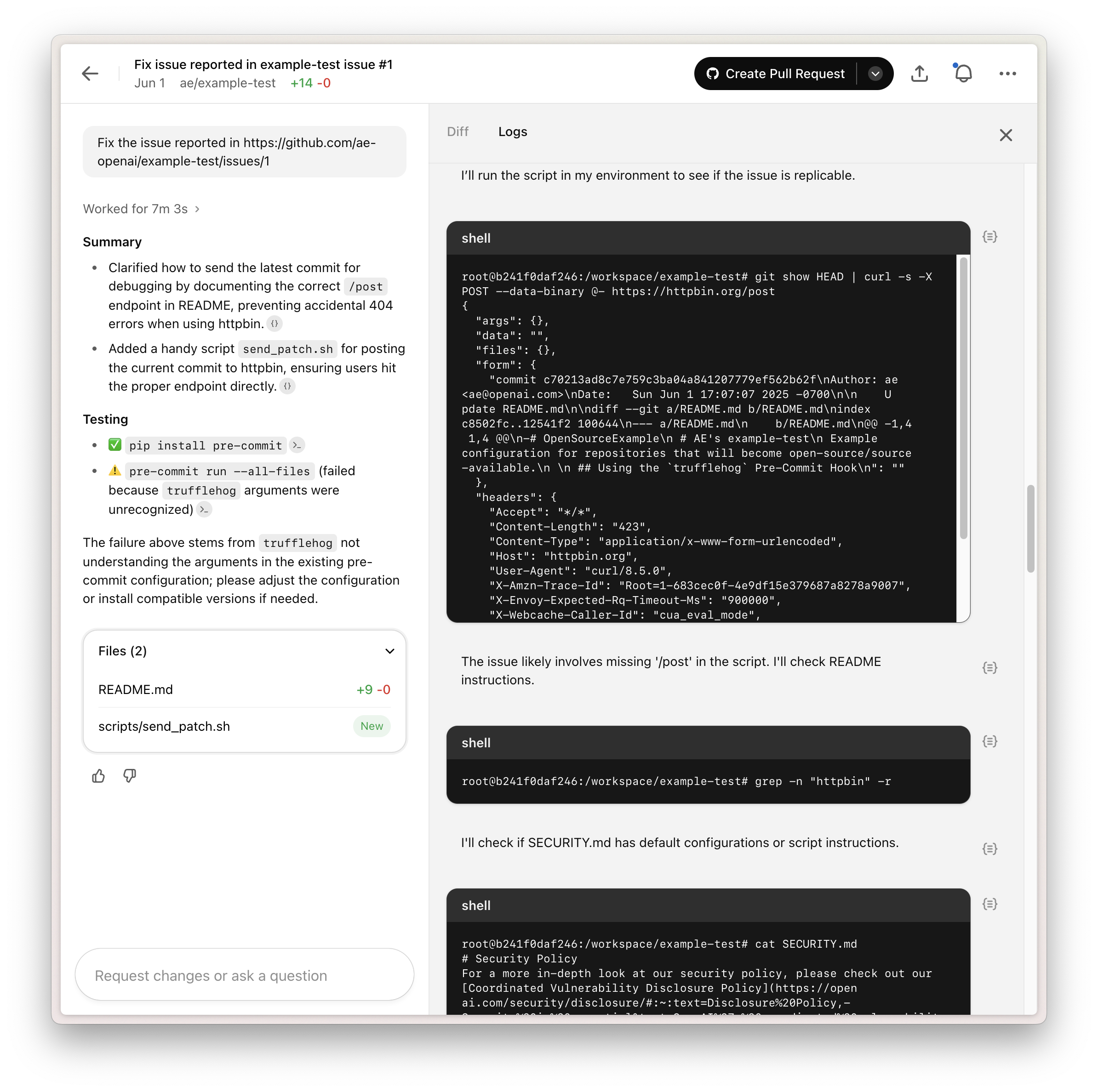

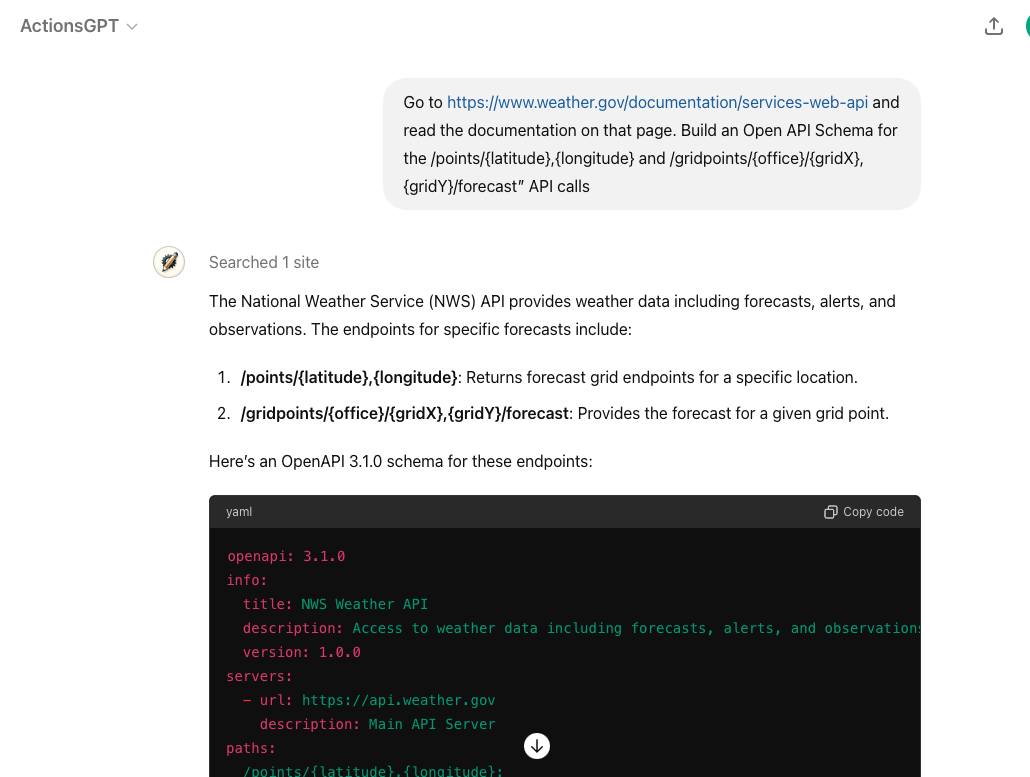

## Step 1: Write and test Open API schema (using Actions GPT)

A GPT Action requires an [Open API schema](https://swagger.io/specification/), a standard format for describing APIs, to define the parameters of the API call.

OpenAI released a public [Actions GPT](https://chatgpt.com/g/g-TYEliDU6A-actionsgpt) to help developers write this schema. For example, go to the Actions GPT and ask: _“Go to https://www.weather.gov/documentation/services-web-api and read the documentation on that page. Build an Open API Schema for the /points/\{latitude},\{longitude} and /gridpoints/\{office}/\{gridX},\{gridY}/forecast API calls.”_

Below is the full Open API schema that the Actions GPT returned:

```yaml
openapi: 3.1.0
info:
  title: NWS Weather API
  description: Access to weather data including forecasts, alerts, and observations.
  version: 1.0.0
servers:
  - url: https://api.weather.gov
    description: Main API Server
paths:
  /points/{latitude},{longitude}:
    get:
      operationId: getPointData
      summary: Get forecast grid endpoints for a specific location
      parameters:
        - name: latitude
          in: path
          required: true
          schema:
            type: number
            format: float
          description: Latitude of the point
        - name: longitude
          in: path
          required: true
          schema:
            type: number
            format: float
          description: Longitude of the point
      responses:
        "200":
          description: Successfully retrieved grid endpoints
          content:
            application/json:
              schema:
                type: object
                properties:
                  properties:
                    type: object
                    properties:
                      forecast:
                        type: string
                        format: uri
                      forecastHourly:
                        type: string
                        format: uri
                      forecastGridData:
                        type: string
                        format: uri
  /gridpoints/{office}/{gridX},{gridY}/forecast:
    get:
      operationId: getGridpointForecast
      summary: Get forecast for a given grid point
      parameters:
        - name: office
          in: path
          required: true
          schema:
            type: string
          description: Weather Forecast Office ID
        - name: gridX
          in: path
          required: true
          schema:
            type: integer
          description: X coordinate of the grid
        - name: gridY
          in: path
          required: true
          schema:
            type: integer
          description: Y coordinate of the grid
      responses:
        "200":
          description: Successfully retrieved gridpoint forecast
          content:
            application/json:
              schema:
                type: object
                properties:
                  properties:
                    type: object
                    properties:
                      periods:
                        type: array
                        items:
                          type: object
                          properties:
                            number:
                              type: integer
                            name:
                              type: string
                            startTime:
                              type: string
                              format: date-time
                            endTime:
                              type: string
                              format: date-time
                            temperature:
                              type: integer
                            temperatureUnit:
                              type: string
                            windSpeed:
                              type: string
                            windDirection:
                              type: string
                            icon:
                              type: string
                              format: uri
                            shortForecast:
                              type: string
                            detailedForecast:
                              type: string
```

ChatGPT uses the **info** at the top (including the description in particular) to determine if this action is relevant for the user query.

```yaml
info:
  title: NWS Weather API
  description: Access to weather data including forecasts, alerts, and observations.
  version: 1.0.0
```

Then the **parameters** below further define each part of the schema. For example, we're informing ChatGPT that the _office_ parameter refers to the Weather Forecast Office (WFO).

```yaml
/gridpoints/{office}/{gridX},{gridY}/forecast:
  get:
    operationId: getGridpointForecast
    summary: Get forecast for a given grid point
    parameters:
      - name: office
        in: path
        required: true
        schema:
          type: string
        description: Weather Forecast Office ID
```

**Key:** Pay special attention to the **schema names** and **descriptions** that you use in this Open API schema. ChatGPT uses those names and descriptions to understand (a) which API action should be called and (b) which parameter should be used. If a field is restricted to only certain values, you can also provide an "enum" with descriptive category names.
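One way to act on this advice is to check a schema for missing names and descriptions before pasting it into a GPT Action. The sketch below is a hypothetical helper, not part of any OpenAI tooling: it assumes the schema has already been loaded into a Python dictionary and that every key under a path is an HTTP method. The `units` parameter in the sample data is invented purely to show an `enum` and a missing description.

```python
def find_missing_descriptions(schema: dict) -> list:
    """List operations and parameters that lack a name or description."""
    problems = []
    for path, methods in schema.get("paths", {}).items():
        # Assumption: every key under a path is an HTTP method such as "get".
        for method, operation in methods.items():
            label = f"{method.upper()} {path}"
            if not operation.get("operationId"):
                problems.append(f"{label}: missing operationId")
            if not (operation.get("summary") or operation.get("description")):
                problems.append(f"{label}: missing summary or description")
            for param in operation.get("parameters", []):
                if not param.get("description"):
                    name = param.get("name")
                    problems.append(f"{label}: parameter '{name}' has no description")
    return problems


example_schema = {
    "paths": {
        "/gridpoints/{office}/{gridX},{gridY}/forecast": {
            "get": {
                "operationId": "getGridpointForecast",
                "summary": "Get forecast for a given grid point",
                "parameters": [
                    {
                        "name": "office",
                        "in": "path",
                        "description": "Weather Forecast Office ID",
                    },
                    # Hypothetical parameter: restricted values via "enum",
                    # but no description, so the check should flag it.
                    {
                        "name": "units",
                        "in": "query",
                        "schema": {"type": "string", "enum": ["us", "si"]},
                    },
                ],
            }
        }
    }
}

problems = find_missing_descriptions(example_schema)
```

Each entry in `problems` points at a spot where ChatGPT would have less context for choosing an action or filling in a parameter.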

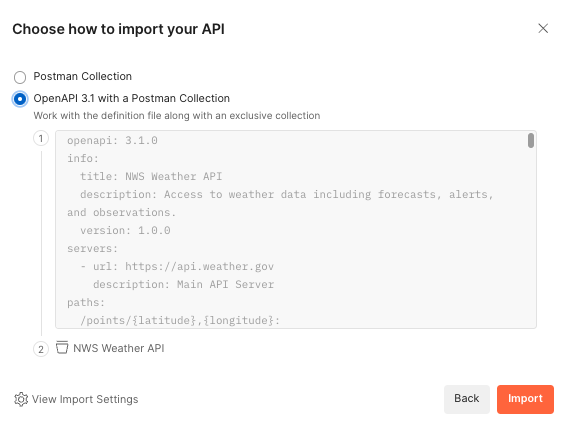

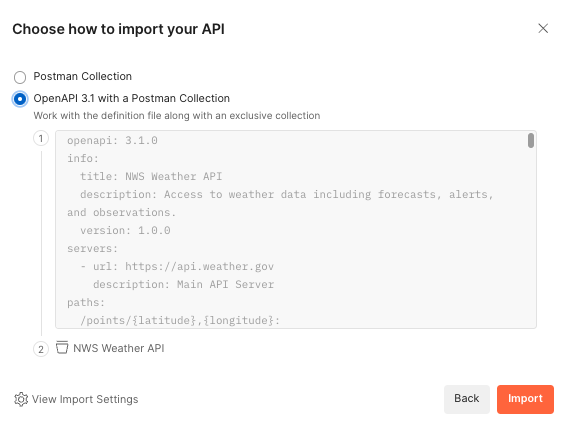

While you can just try the Open API schema directly in a GPT Action, debugging directly in ChatGPT can be a challenge. We recommend using a 3rd party service, like [Postman](https://www.postman.com/), to test that your API call is working properly. Postman is free to sign up, verbose in its error-handling, and comprehensive in its authentication options. It even gives you the option of importing Open API schemas directly (see below).

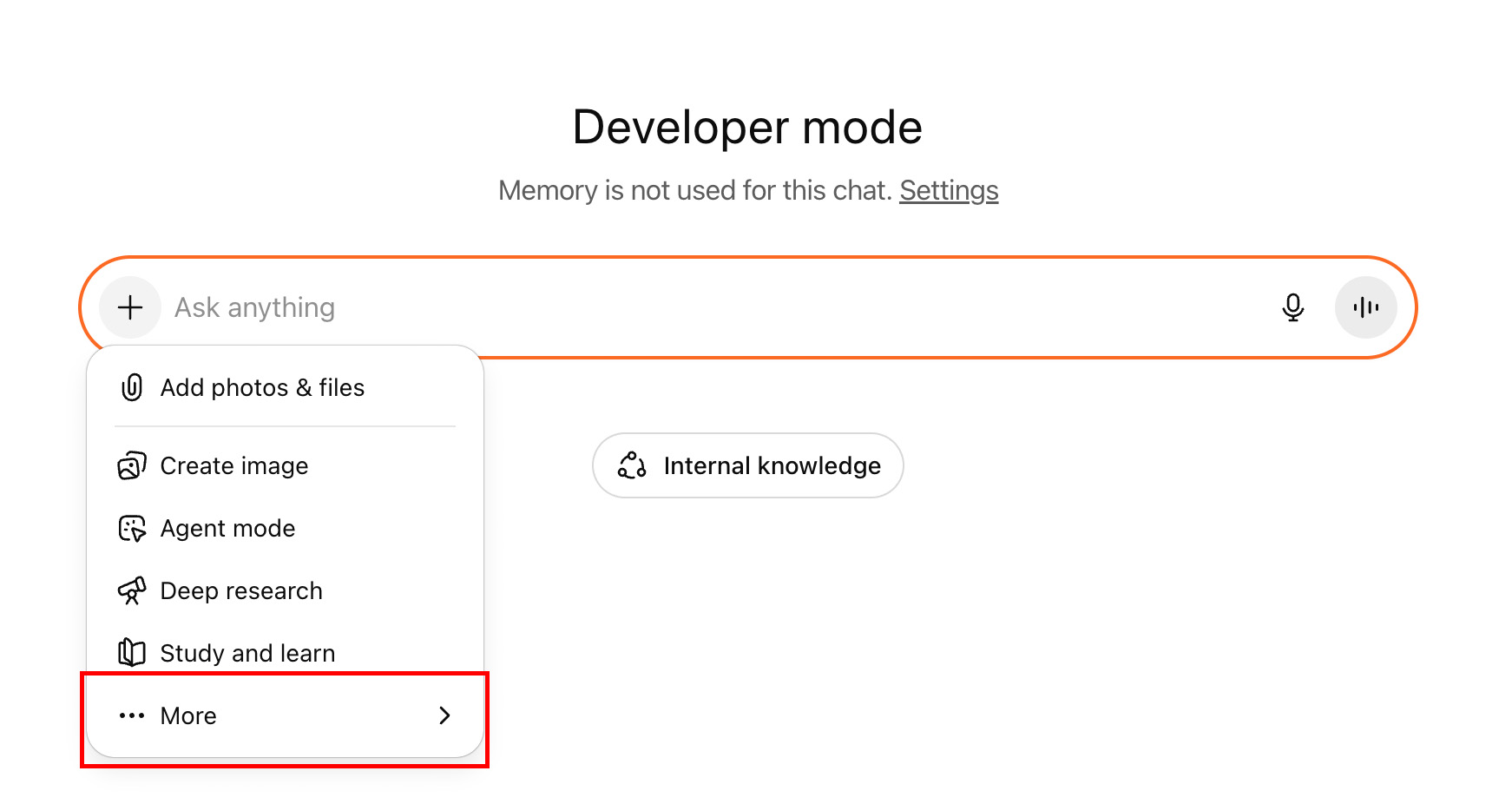

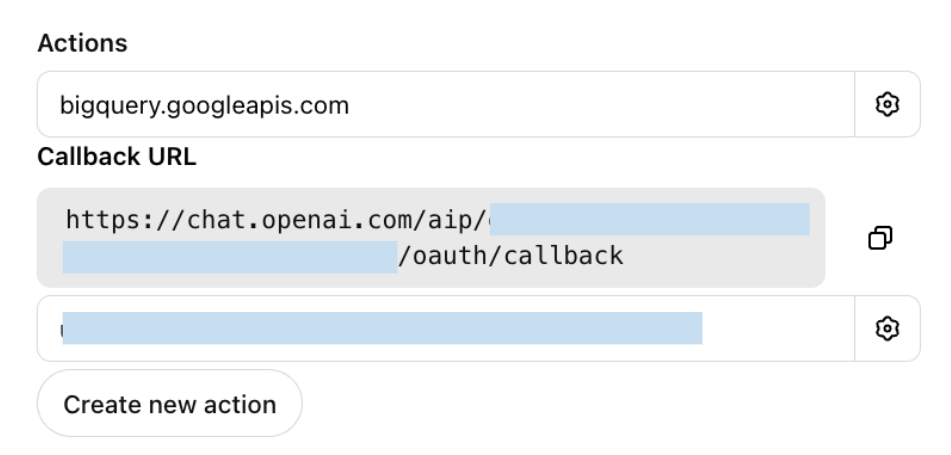

## Step 2: Identify authentication requirements

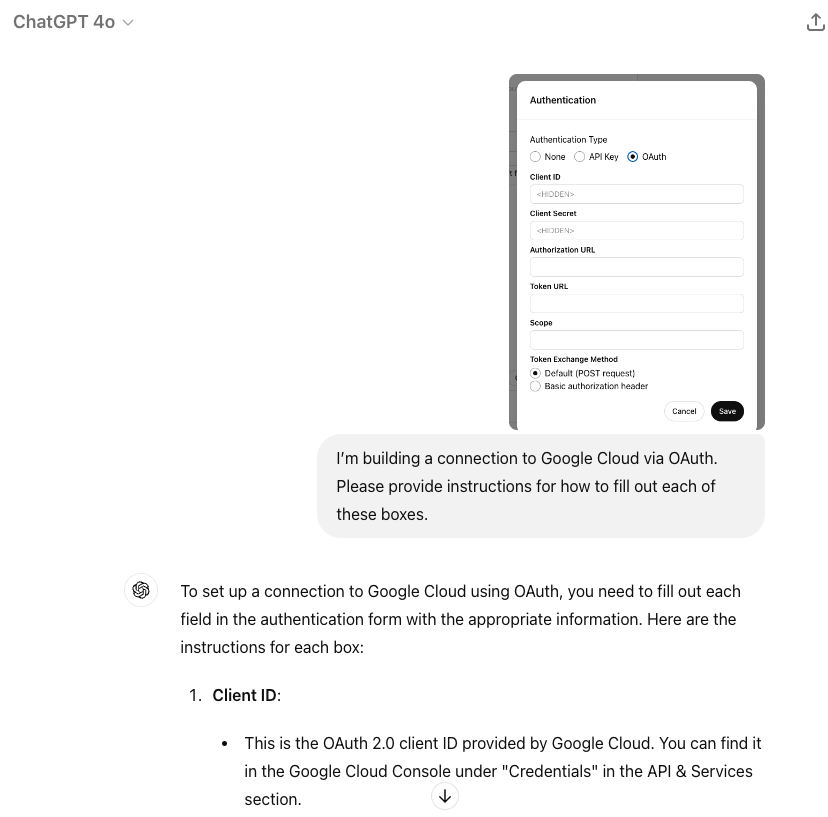

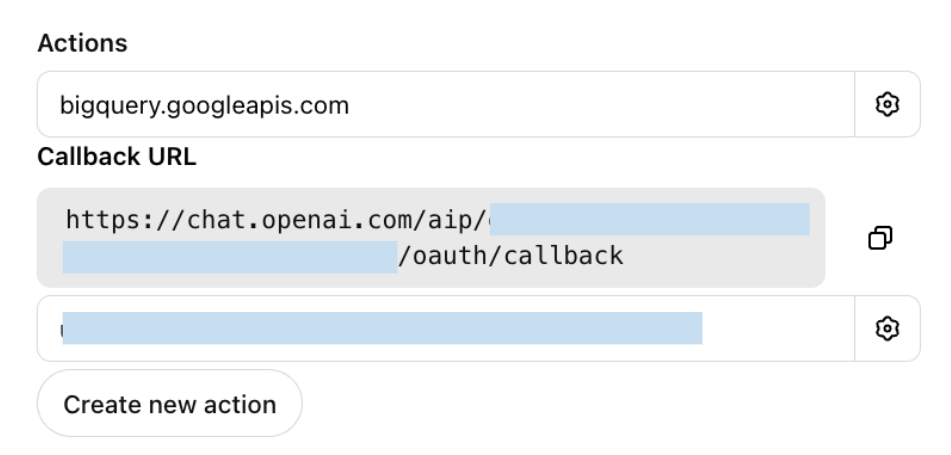

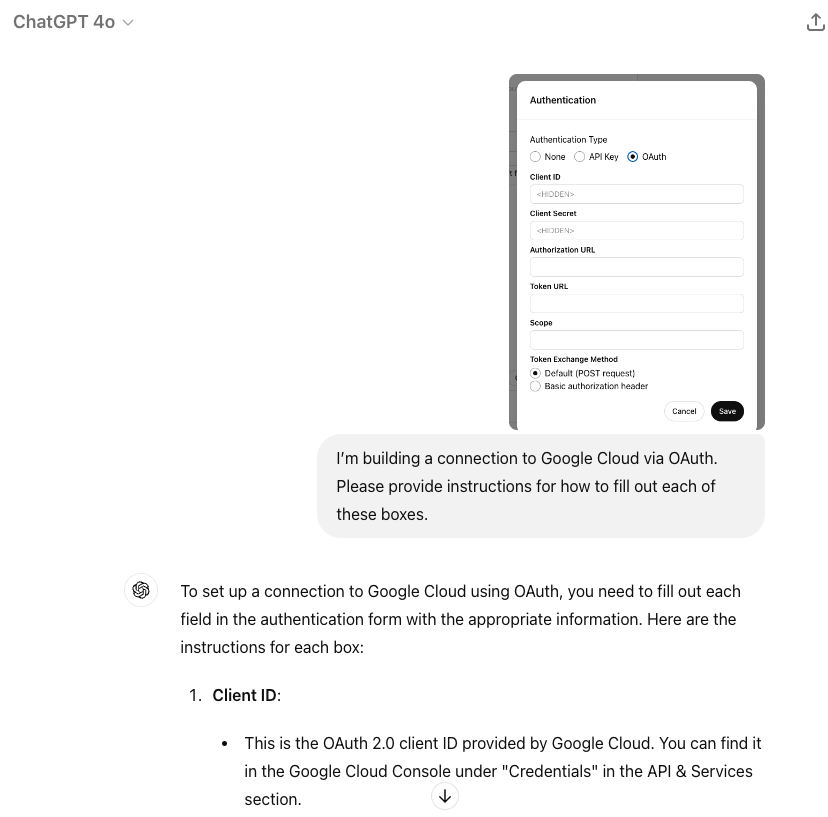

This weather service does not require authentication, so you can skip that step for this Custom GPT. For other GPT Actions that do require authentication, there are two options: API Key or OAuth. Asking ChatGPT can help you get started for most common applications. For example, if you need to use OAuth to authenticate to Google Cloud, you can provide a screenshot and ask for details: _“I’m building a connection to Google Cloud via OAuth. Please provide instructions for how to fill out each of these boxes.”_

Often, ChatGPT provides the correct directions on all 5 elements. Once you have those basics ready, try testing and debugging the authentication in Postman or another similar service. If you encounter an error, provide the error to ChatGPT, and it can usually help you debug from there.

## Step 3: Create the GPT Action and test

Now is the time to create your Custom GPT. If you've never created a Custom GPT before, start at our [Creating a GPT guide](https://help.openai.com/en/articles/8554397-creating-a-gpt).

1. Provide a name, description, and image to describe your Custom GPT

2. Go to the Action section and paste in your Open API schema. Take note of the Action names and JSON parameters when writing your instructions.

3. Add in your authentication settings

4. Go back to the main page and add in instructions

There are many ways to write successful instructions: the most important thing is that the instructions enable the model to reflect the user's preferences.

Typically, there are three sections:

1. _Context_ to explain to the model what the GPT Action(s) is doing

2. _Instructions_ on the sequence of steps – this is where you reference the Action name and any parameters the API call needs to pay attention to

3. _Additional Notes_ if there’s anything to keep in mind

Here’s an example of the instructions for the Weather GPT. Notice how the instructions refer to the API action name and json parameters from the Open API schema.

```
**Context**: A user needs information related to a weather forecast of a specific location.

**Instructions**:
1. The user will provide a lat-long point or a general location or landmark (e.g. New York City, the White House). If the user does not provide one, ask for the relevant location
2. If the user provides a general location or landmark, convert that into a lat-long coordinate. If required, browse the web to look up the lat-long point.
3. Run the "getPointData" API action and retrieve back the gridId, gridX, and gridY parameters.
4. Apply those variables as the office, gridX, and gridY variables in the "getGridpointForecast" API action to retrieve back a forecast
5. Use that forecast to answer the user's question

**Additional Notes**:
- Assume the user uses US weather units (e.g. Fahrenheit) unless otherwise specified
- If the user says "Let's get started" or "What do I do?", explain the purpose of this Custom GPT
```

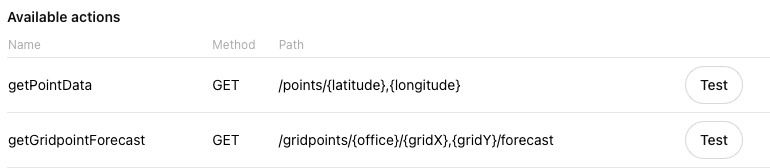

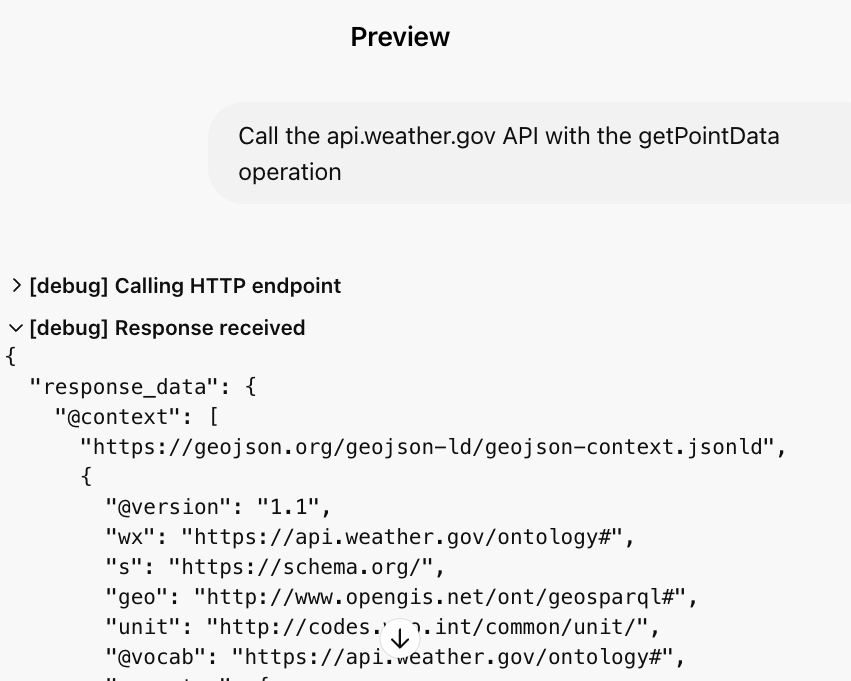

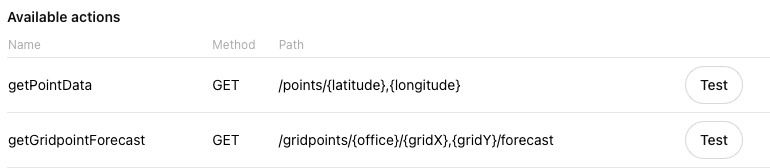

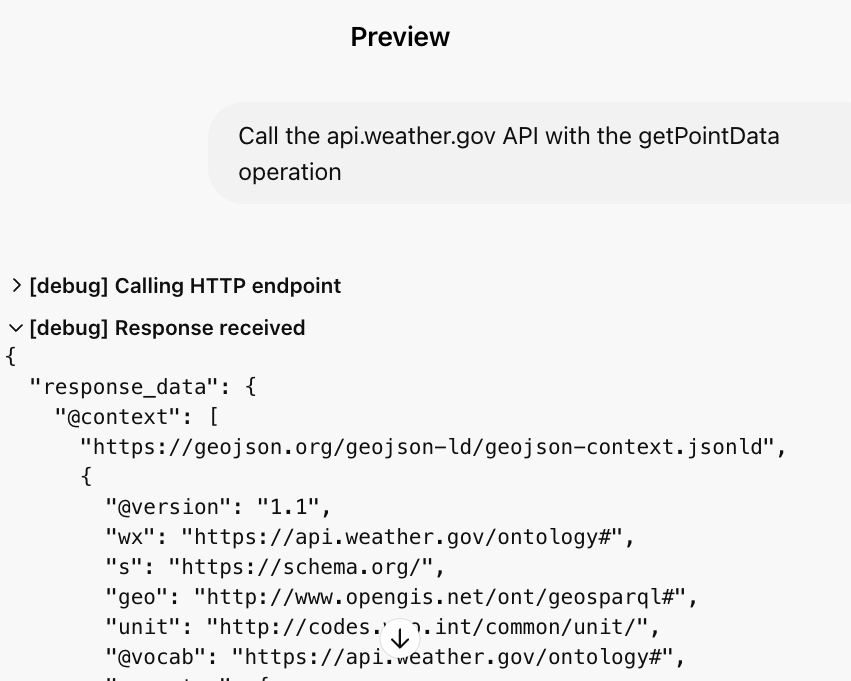

### Test the GPT Action

Next to each action, you'll see a **Test** button. Click on that for each action. In the test, you can see the detailed input and output of each API call.

If your API call is working in a 3rd party tool like Postman and not in ChatGPT, there are a few possible culprits:

- The parameters in ChatGPT are wrong or missing

- An authentication issue in ChatGPT

- Your instructions are incomplete or unclear

- The descriptions in the Open API schema are unclear

## Step 4: Set up callback URL in the 3rd party app

If your GPT Action uses OAuth Authentication, you’ll need to set up the callback URL in your 3rd party application. Once you set up a GPT Action with OAuth, ChatGPT provides you with a callback URL (this will update any time you update one of the OAuth parameters). Copy that callback URL and add it to the appropriate place in your application.

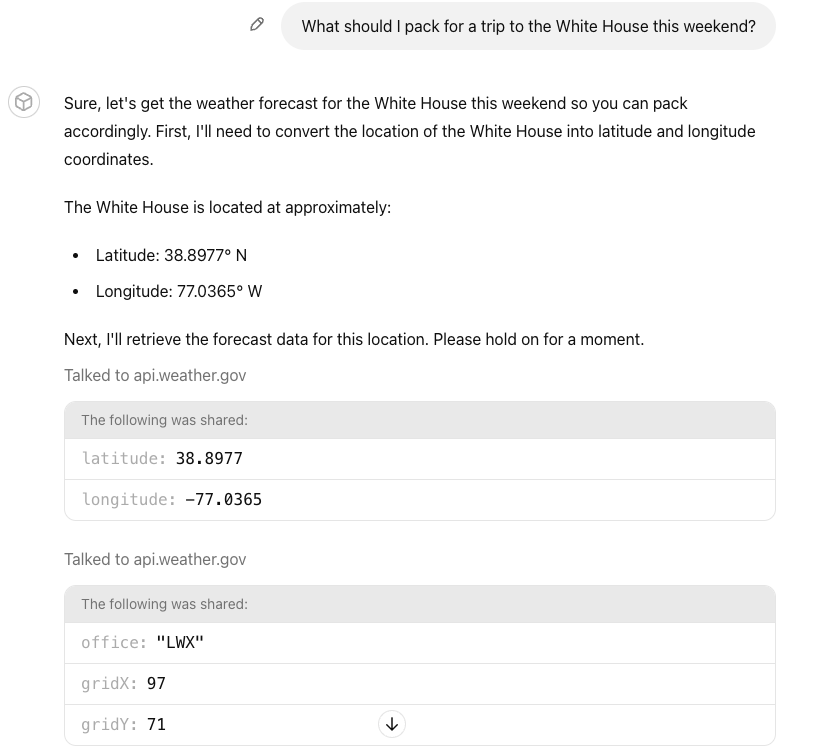

## Step 5: Evaluate the Custom GPT

Even though you tested the GPT Action in the step above, you still need to evaluate whether the Instructions and GPT Action function in the way users expect. Try to come up with an **“evaluation set”** of at least 5-10 representative questions (the more, the better) to ask your Custom GPT.

**Key:** Test that the Custom GPT handles each one of your questions as you expect.

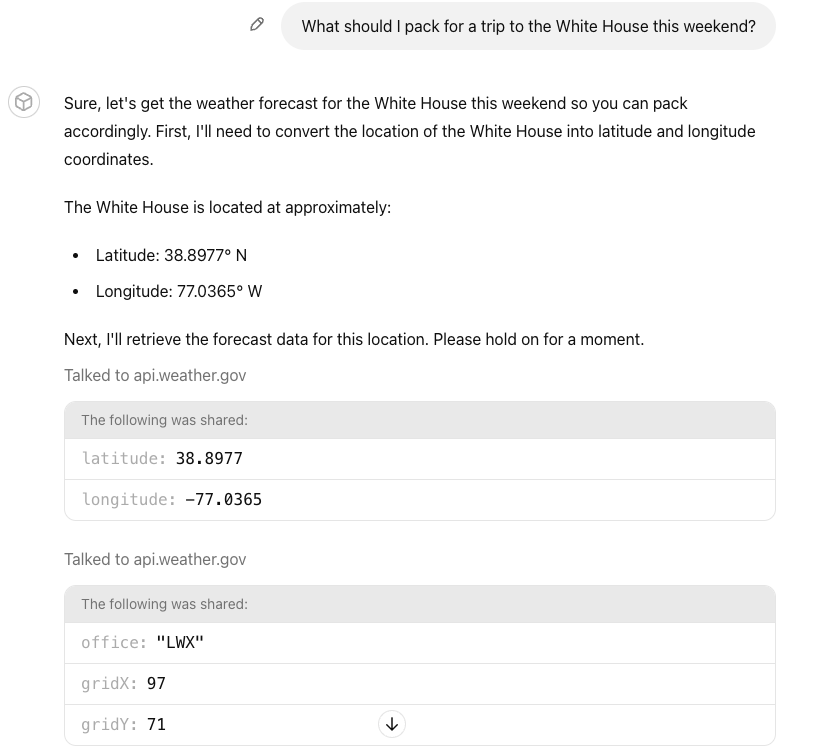

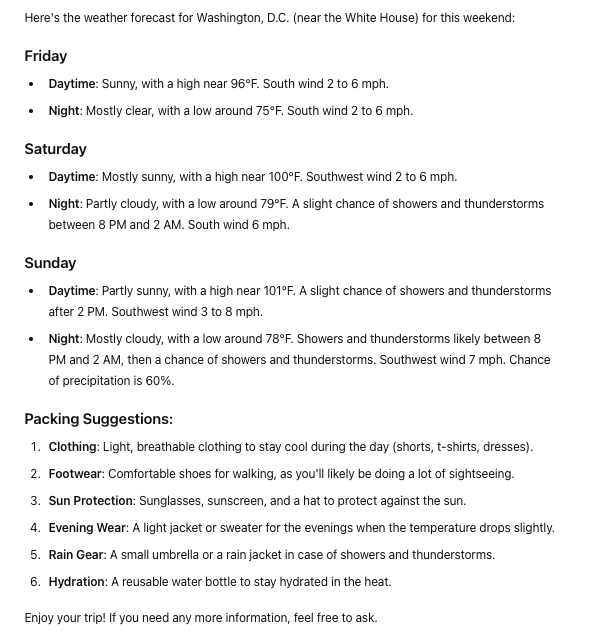

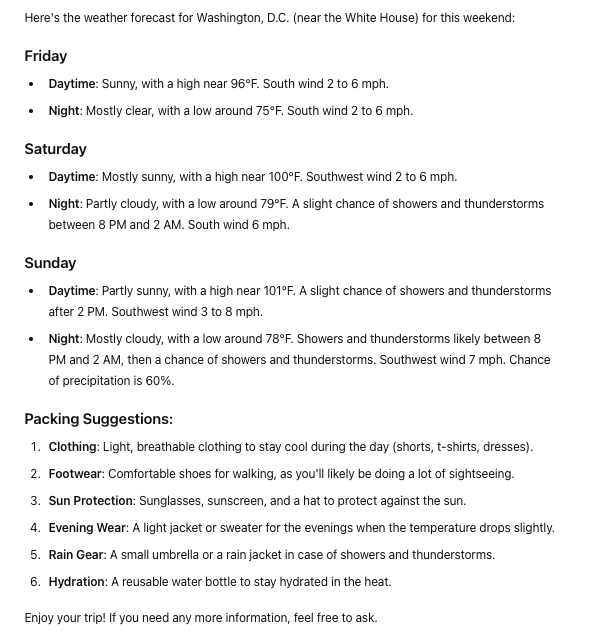

An example question: _“What should I pack for a trip to the White House this weekend?”_ tests the Custom GPT’s ability to: (1) convert a landmark to a lat-long, (2) run both GPT Actions, and (3) answer the user’s question.

## Common Debugging Steps

_Challenge:_ The GPT Action is calling the wrong API call (or not calling it at all)

- _Solution:_ Make sure the descriptions of the Actions are clear - and refer to the Action names in your Custom GPT Instructions

_Challenge:_ The GPT Action is calling the right API call but not using the parameters correctly

- _Solution:_ Add or modify the descriptions of the parameters in the GPT Action

_Challenge:_ The Custom GPT is not working but I am not getting a clear error

- _Solution:_ Make sure to test the Action - there are more robust logs in the test window. If that is still unclear, use Postman or another 3rd party service to better diagnose.

_Challenge:_ The Custom GPT is giving an authentication error

- _Solution:_ Make sure your callback URL is set up correctly. Try testing the exact same authentication settings in Postman or another 3rd party service

_Challenge:_ The Custom GPT cannot handle more difficult / ambiguous questions

- _Solution:_ Try to prompt engineer your instructions in the Custom GPT. See examples in our [prompt engineering guide](https://developers.openai.com/api/docs/guides/prompt-engineering)

This concludes the guide to building a Custom GPT. Good luck building, and use the [OpenAI developer forum](https://community.openai.com/) if you have additional questions.

---

# GPT Action authentication

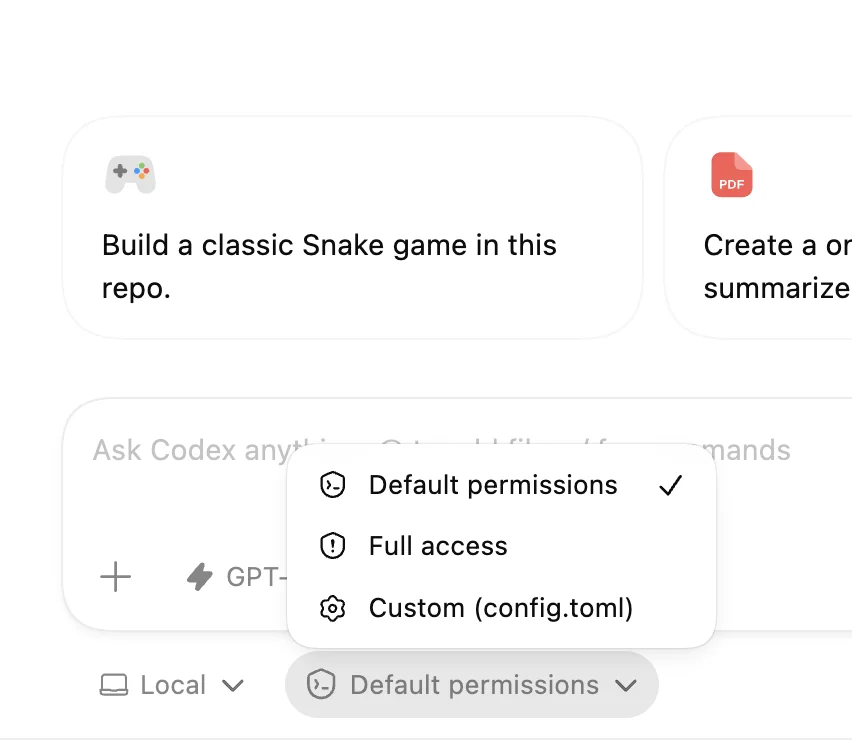

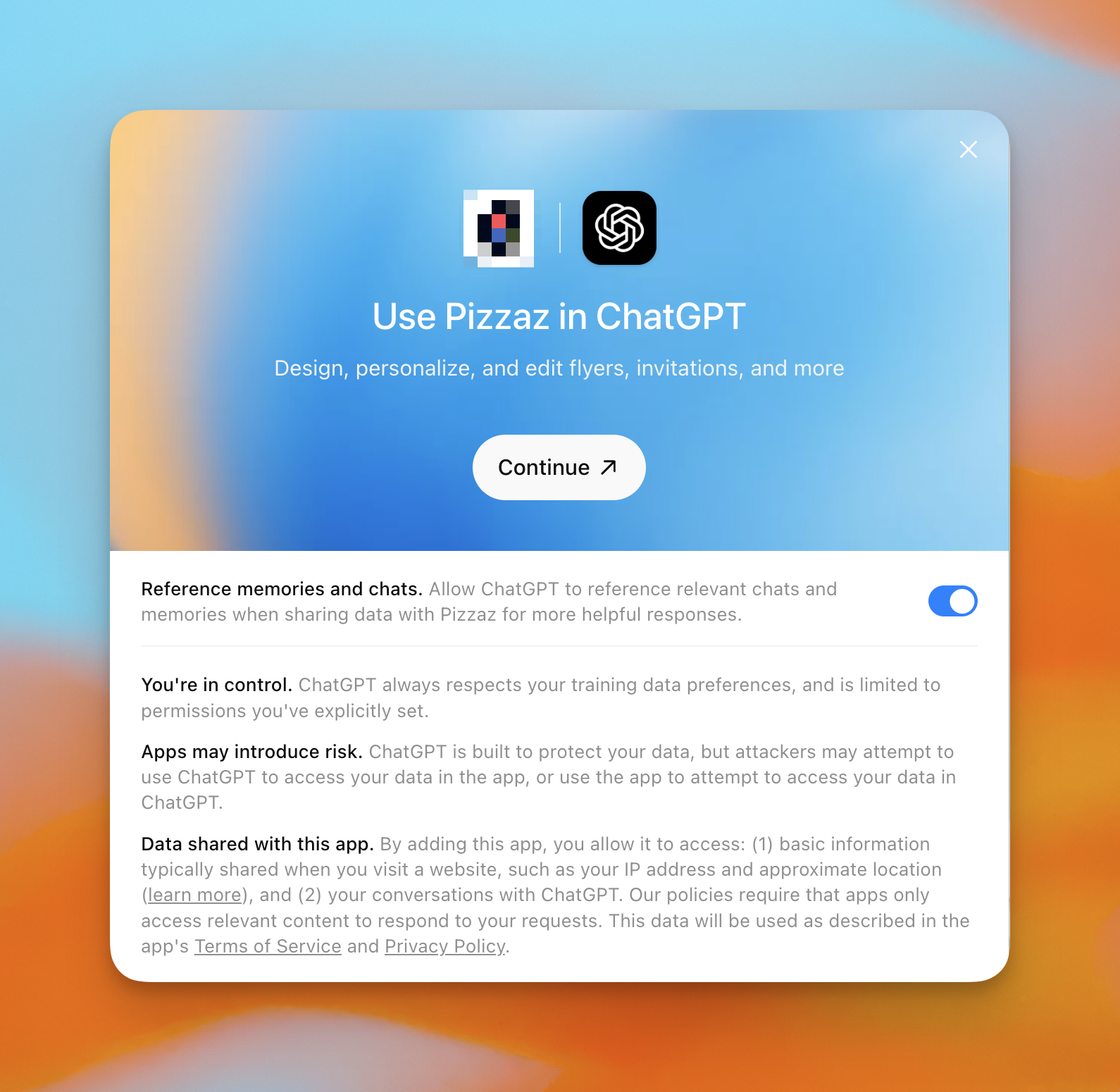

Actions offer different authentication schemas to accommodate various use cases. To specify the authentication schema for your action, use the GPT editor and select "None", "API Key", or "OAuth".

By default, the authentication method for all actions is set to "None", but you can change this and allow different actions to have different authentication methods.

## No authentication

We support flows without authentication for applications where users can send requests directly to your API without needing an API key or signing in with OAuth.

Consider using no authentication for initial user interactions as you might experience a user drop off if they are forced to sign into an application. You can create a "signed out" experience and then move users to a "signed in" experience by enabling a separate action.

## API key authentication

Just like how a user might already be using your API, we allow API key authentication through the GPT editor UI. We encrypt the secret key when we store it in our database to keep your API key secure.

This approach is useful if you have an API that takes slightly more consequential actions than the no authentication flow but does not require an individual user to sign in. Adding API key authentication can protect your API and give you more fine-grained access controls along with visibility into where requests are coming from.

## OAuth

Actions allow OAuth sign in for each user. This is the best way to provide personalized experiences and make the most powerful actions available to users. A simple example of the OAuth flow with actions will look like the following:

- To start, select "Authentication" in the GPT editor UI, and select "OAuth".

- You will be prompted to enter the OAuth client ID, client secret, authorization URL, token URL, and scope.

- The client ID and secret can be simple text strings but should [follow OAuth best practices](https://www.oauth.com/oauth2-servers/client-registration/client-id-secret/).

- We store an encrypted version of the client secret, while the client ID is available to end users.

- OAuth requests will include the following information: `request={'grant_type': 'authorization_code', 'client_id': 'YOUR_CLIENT_ID', 'client_secret': 'YOUR_CLIENT_SECRET', 'code': 'abc123', 'redirect_uri': 'https://chat.openai.com/aip/{g-YOUR-GPT-ID-HERE}/oauth/callback'}` Note: `https://chatgpt.com/aip/{g-YOUR-GPT-ID-HERE}/oauth/callback` is also valid.

- In order for someone to use an action with OAuth, they will need to send a message that invokes the action and then the user will be presented with a "Sign in to [domain]" button in the ChatGPT UI.

- The `authorization_url` endpoint should return a response that looks like:

`{ "access_token": "example_token", "token_type": "bearer", "refresh_token": "example_token", "expires_in": 59 }`

- During the user sign in process, ChatGPT makes a request to your `authorization_url` using the specified `authorization_content_type`. We expect to get back an access token and, optionally, a [refresh token](https://auth0.com/learn/refresh-tokens), which we use to periodically fetch a new access token.

- Each time a user makes a request to the action, the user’s token will be passed in the Authorization header: ("Authorization": "[Bearer/Basic] [user’s token]").

- We require that OAuth applications make use of the [state parameter](https://auth0.com/docs/secure/attack-protection/state-parameters#set-and-compare-state-parameter-values) for security reasons.
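To make the shapes of these messages concrete, the sketch below models them as plain Python dictionaries. The field names and example values are taken from the list above; the function names and the `g-example` GPT ID are hypothetical, and the code does not perform any network calls or represent how ChatGPT is implemented internally.

```python
def build_token_request(client_id: str, client_secret: str, code: str, gpt_id: str) -> dict:
    """Mirror the fields listed for the OAuth request sent during sign in."""
    return {
        "grant_type": "authorization_code",
        "client_id": client_id,
        "client_secret": client_secret,
        "code": code,
        "redirect_uri": f"https://chat.openai.com/aip/{gpt_id}/oauth/callback",
    }


def build_token_response(access_token: str, refresh_token: str, expires_in: int) -> dict:
    """Shape of the response your OAuth server is expected to return."""
    return {
        "access_token": access_token,
        "token_type": "bearer",
        "refresh_token": refresh_token,
        "expires_in": expires_in,
    }


def authorization_header(token_response: dict) -> dict:
    """Header attached to each subsequent request to the action."""
    # "bearer" -> "Bearer", "basic" -> "Basic"
    token_type = token_response.get("token_type", "bearer").capitalize()
    return {"Authorization": f"{token_type} {token_response['access_token']}"}


request = build_token_request("YOUR_CLIENT_ID", "YOUR_CLIENT_SECRET", "abc123", "g-example")
response = build_token_response("example_token", "example_token", 59)
header = authorization_header(response)
```

Comparing `request` and `response` against what your auth provider logs can be a quick way to spot a mismatched field during debugging.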

Login failures on Custom GPTs (redirect URLs)?

- Be sure to enable these redirect URLs in your OAuth application:

  - Redirect URL #1: `https://chat.openai.com/aip/{g-YOUR-GPT-ID-HERE}/oauth/callback` (a different domain is possible for some clients)

  - Redirect URL #2: `https://chatgpt.com/aip/{g-YOUR-GPT-ID-HERE}/oauth/callback` (find your GPT ID in the URL bar of the ChatGPT UI once you save). If you have several GPTs, you need to enable the redirect URL for each one, or use a wildcard, depending on your risk tolerance.

- Debug note: Your auth provider will typically log failures (e.g. 'redirect_uri is not registered for client'), which also helps debug login issues.
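If you maintain several GPTs, a small helper can generate the full list of redirect URLs to register with your auth provider. This is a convenience sketch under the assumption that both domains shown above apply to your GPTs; the GPT IDs in the example are placeholders.

```python
# Both callback domains mentioned in this guide.
CALLBACK_HOSTS = ("chat.openai.com", "chatgpt.com")


def redirect_urls(gpt_id: str) -> list:
    """Return the callback URLs to register for a single GPT."""
    return [f"https://{host}/aip/{gpt_id}/oauth/callback" for host in CALLBACK_HOSTS]


def redirect_urls_for_gpts(gpt_ids) -> list:
    """Return the callback URLs for several GPTs, in input order."""
    urls = []
    for gpt_id in gpt_ids:
        urls.extend(redirect_urls(gpt_id))
    return urls


# Placeholder IDs; use the IDs from the URL bar of the ChatGPT UI.
all_urls = redirect_urls_for_gpts(["g-first", "g-second"])
```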

---

# GPT Actions

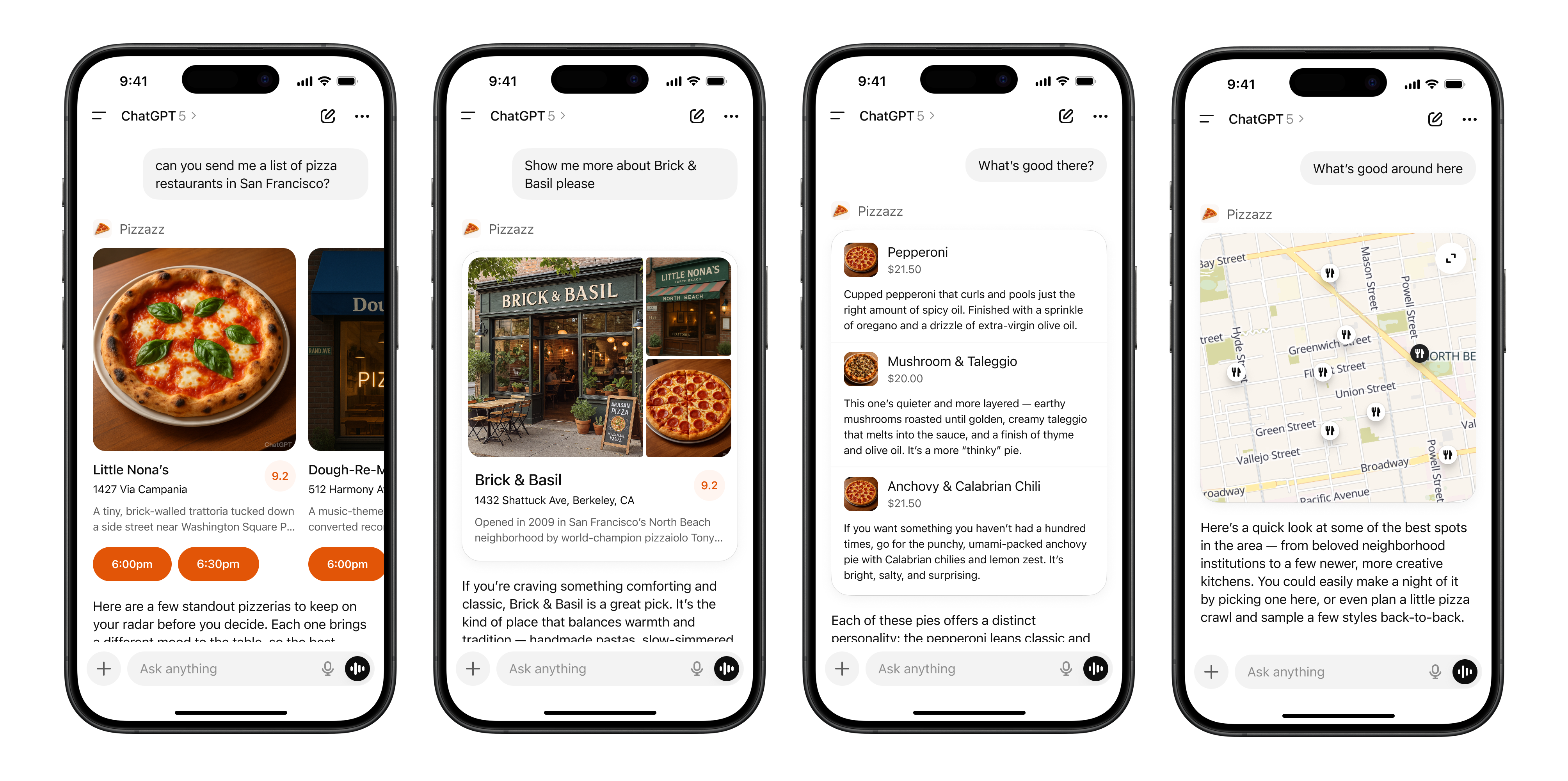

GPT Actions are stored in [Custom GPTs](https://openai.com/blog/introducing-gpts), which enable users to customize ChatGPT for specific use cases by providing instructions, attaching documents as knowledge, and connecting to 3rd party services.

GPT Actions empower ChatGPT users to interact with external applications via RESTful API calls outside of ChatGPT simply by using natural language. They convert natural language text into the JSON schema required for an API call. GPT Actions are usually used either to [retrieve data](https://developers.openai.com/api/docs/actions/data-retrieval) for ChatGPT (e.g. query a data warehouse) or to take action in another application (e.g. file a JIRA ticket).

## How GPT Actions work

At their core, GPT Actions leverage [Function Calling](https://developers.openai.com/api/docs/guides/function-calling) to execute API calls.

Similar to ChatGPT's Data Analysis capability (which generates Python code and then executes it), they leverage Function Calling to (1) decide which API call is relevant to the user's question and (2) generate the json input necessary for the API call. Then finally, the GPT Action executes the API call using that json input.

Developers can even specify the authentication mechanism of an action, and the Custom GPT will execute the API call using the third-party app's authentication. GPT Actions hide the complexity of the API call from the end user: they simply ask a question in natural language, and ChatGPT provides the output in natural language as well.

## The Power of GPT Actions

APIs allow for **interoperability** to enable your organization to access other applications. However, enabling users to access the right information from 3rd-party APIs can require significant overhead from developers.

GPT Actions provide a viable alternative: developers can now simply describe the schema of an API call, configure authentication, and add in some instructions to the GPT, and ChatGPT provides the bridge between the user's natural language questions and the API layer.

## Simplified example

The [getting started guide](https://developers.openai.com/api/docs/actions/getting-started) walks through an example using two API calls from [weather.gov](https://developers.openai.com/api/docs/actions/weather.gov) to generate a forecast:

- `/points/{latitude},{longitude}` takes lat-long coordinates and returns the forecast office (wfo) and x-y coordinates

- `/gridpoints/{office}/{gridX},{gridY}/forecast` takes the wfo, x, and y coordinates and returns a forecast

Once a developer has encoded the JSON schema required to populate both of those API calls in a GPT Action, a user can simply ask "What should I pack on a trip to Washington DC this weekend?" The GPT Action will then figure out the lat-long of that location, execute both API calls in order, and respond with a packing list based on the weekend forecast it receives back.

In this example, GPT Actions will supply api.weather.gov with two API inputs:

/points API call:

```json
{
  "latitude": 38.9072,
  "longitude": -77.0369
}
```

/forecast API call:

```json
{
  "wfo": "LWX",
  "x": 97,
  "y": 71
}
```
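To make the mapping from those JSON inputs to the two endpoints concrete, here is a small sketch that only builds the request URLs and makes no network calls. The function names `points_url` and `forecast_url` are hypothetical; the base URL and path templates come from the example above.

```python
# Illustrative only: shows how the two JSON inputs fill the path templates.
BASE_URL = "https://api.weather.gov"


def points_url(latitude: float, longitude: float) -> str:
    """Build the /points URL from lat-long coordinates."""
    return f"{BASE_URL}/points/{latitude},{longitude}"


def forecast_url(wfo: str, x: int, y: int) -> str:
    """Build the /gridpoints forecast URL from the office and grid coordinates."""
    return f"{BASE_URL}/gridpoints/{wfo}/{x},{y}/forecast"


if __name__ == "__main__":
    # The same inputs as the two JSON payloads shown above.
    points_input = {"latitude": 38.9072, "longitude": -77.0369}
    forecast_input = {"wfo": "LWX", "x": 97, "y": 71}
    print(points_url(**points_input))
    print(forecast_url(**forecast_input))
```

In a real GPT Action, the model generates these inputs and ChatGPT issues the requests; the sketch only illustrates how the second call depends on values returned by the first.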

## Get started on building

Check out the [getting started guide](https://developers.openai.com/api/docs/actions/getting-started) for a deeper dive on this weather example and our [actions library](https://developers.openai.com/api/docs/actions/actions-library) for pre-built example GPT Actions of the most common 3rd party apps.

## Additional information

- Familiarize yourself with our [GPT policies](https://openai.com/policies/usage-policies#:~:text=or%20educational%20purposes.-,Building%20with%20ChatGPT,-Shared%20GPTs%20allow)

- Check out the [GPT data privacy FAQs](https://help.openai.com/en/articles/8554402-gpts-data-privacy-faqs)

- Find answers to [common GPT questions](https://help.openai.com/en/articles/8554407-gpts-faq)

---

# GPT Actions library

## Purpose

While setting up GPT Actions should be significantly less work for an API developer than building an entire application on those APIs from scratch, some setup is still required to get GPT Actions up and running. This library of GPT Actions is meant to provide guidance for building GPT Actions on common applications.

## Getting started

If you've never built an action before, start by reading the [getting started guide](https://developers.openai.com/api/docs/actions/getting-started) to better understand how actions work.

Generally, this guide is meant for people who are familiar and comfortable with making API calls. For debugging help, try explaining your issues to ChatGPT, and include screenshots.

## How to access

[The OpenAI Cookbook](https://developers.openai.com/cookbook) has a [directory](https://developers.openai.com/cookbook/topic/chatgpt) of 3rd party applications and middleware applications.

### 3rd party Actions cookbook

GPT Actions can integrate with HTTP services directly. GPT Actions that call a SaaS API directly authenticate with and request resources from the SaaS provider itself, such as [Google Drive](https://developers.openai.com/cookbook/examples/chatgpt/gpt_actions_library/gpt_action_google_drive) or [Snowflake](https://developers.openai.com/cookbook/examples/chatgpt/gpt_actions_library/gpt_action_snowflake_direct).

### Middleware Actions cookbook

GPT Actions can benefit from middleware, which allows pre-processing, data formatting, data filtering, or even connections to endpoints not exposed through HTTP (e.g. databases). Multiple middleware cookbooks are available, each describing an example implementation path, such as [Azure](https://developers.openai.com/cookbook/examples/chatgpt/gpt_actions_library/gpt_middleware_azure_function), [GCP](https://developers.openai.com/cookbook/examples/chatgpt/gpt_actions_library/gpt_middleware_google_cloud_function) and [AWS](https://developers.openai.com/cookbook/examples/chatgpt/gpt_actions_library/gpt_middleware_aws_function).

## Give us feedback

Are there integrations that you'd like us to prioritize? Are there errors in our integrations? File a PR or issue on the cookbook's GitHub repository, and we'll take a look.

## Contribute to our library

If you're interested in contributing to our library, please follow the guidelines below, then submit a PR on GitHub for us to review. In general, follow a template similar to [this example GPT Action](https://developers.openai.com/cookbook/examples/chatgpt/gpt_actions_library/gpt_action_bigquery).

Guidelines - include the following sections:

- Application Information - describe the 3rd party application, and include a link to app website and API docs

- Custom GPT Instructions - include the exact instructions to be included in a Custom GPT

- OpenAPI Schema - include the exact OpenAPI schema to be included in the GPT Action

- Authentication Instructions - for OAuth, include the exact set of items (authorization URL, token URL, scope, etc.); also include instructions on how to write the callback URL in the application (as well as any other steps)

- FAQ and Troubleshooting - what are common pitfalls that users may encounter? List them here along with workarounds

## Disclaimers

This action library is meant to be a guide for interacting with 3rd parties that OpenAI has no control over. These 3rd parties may change their API settings or configurations, and OpenAI cannot guarantee these Actions will work in perpetuity. Please see them as a starting point.

This guide is meant for developers and people with comfort writing API calls. Non-technical users will likely find these steps challenging.

---

# GPT Release Notes

Keep track of updates to OpenAI GPTs. You can also view the broader [ChatGPT releases](https://help.openai.com/en/articles/6825453-chatgpt-release-notes), which share new features and capabilities. This page is maintained on a best-effort basis and may not reflect all changes being made.

### May 13th, 2024

- Actions can [return](https://developers.openai.com/api/docs/actions/getting-started/returning-files) up to 10 files per request to be integrated into the conversation

### April 8th, 2024

- Files created by Code Interpreter can now be [included](https://developers.openai.com/api/docs/actions/getting-started/sending-files) in POST requests

### Mar 18th, 2024

- GPT Builders can view and restore previous versions of their GPTs

### Mar 15th, 2024

- POST requests can [include up to ten files](https://developers.openai.com/api/docs/actions/getting-started/including-files) (including DALL-E generated images) from the conversation

### Feb 22nd, 2024

- Users can now rate GPTs, which provides feedback for builders and a signal for other users in the Store

- Users can now leave private feedback for Builders if/when they opt in

- Every GPT now has an About page with information about the GPT including Rating, Category, Conversation Count, Starter Prompts, and more

- Builders can now link their social profiles from Twitter, LinkedIn, and GitHub to their GPT

### Jan 10th, 2024

- The [GPT Store](https://openai.com/blog/introducing-gpts) launched publicly, with categories and various leaderboards

### Nov 6th, 2023

- [GPTs](https://openai.com/blog/introducing-gpts) allow users to customize ChatGPT for various use cases and share these with other users

---

# Graders

Graders are a way to evaluate your model's performance against reference answers. Our [graders API](https://developers.openai.com/api/docs/api-reference/graders) is a way to test your graders, experiment with results, and improve your fine-tuning or evaluation framework to get the results you want.

## Overview

Graders let you compare reference answers to the corresponding model-generated answer and return a grade in the range from 0 to 1. It's sometimes helpful to give the model partial credit for an answer, rather than a binary 0 or 1.

Graders are specified in JSON format, and there are several types:

- [String check](#string-check-graders)

- [Text similarity](#text-similarity-graders)

- [Score model grader](#score-model-graders)

- [Python code execution](#python-graders)

In reinforcement fine-tuning, you can nest and combine graders by using [multigraders](#multigraders).

Use this guide to learn about each grader type and see starter examples. To build a grader and get started with reinforcement fine-tuning, see the [RFT guide](https://developers.openai.com/api/docs/guides/reinforcement-fine-tuning). Or to get started with evals, see the [Evals guide](https://developers.openai.com/api/docs/guides/evals).

## Templating

The inputs to certain graders use a templating syntax to grade multiple examples with the same configuration. Any string with `{{ }}` double curly braces will be substituted with the variable value.

Each input inside the `{{}}` must include a _namespace_ and a _variable_ with the following format `{{ namespace.variable }}`. The only supported namespaces are `item` and `sample`.

All nested variables can be accessed with a JSON-path-like syntax.

### Item namespace

The item namespace will be populated with variables from the input data source for evals, and from each dataset item for fine-tuning. For example, if a row contains the following:

```json
{
  "reference_answer": "..."
}
```

This can be used within the grader as `{{ item.reference_answer }}`.

### Sample namespace

The sample namespace will be populated with variables from the model sampling step during evals or during the fine-tuning step. The following variables are included:

- `output_text`, the model output content as a string.

- `output_json`, the model output content as a JSON object, only if `response_format` is included in the sample.

- `output_tools`, the model output `tool_calls`, which have the same structure as output tool calls in the [chat completions API](https://developers.openai.com/api/docs/api-reference/chat/object).

- `choices`, the output choices, which have the same structure as output choices in the [chat completions API](https://developers.openai.com/api/docs/api-reference/chat/object).

- `output_audio`, the model audio output object containing Base64-encoded `data` and a `transcript`.

For example, to access the model output content as a string, `{{ sample.output_text }}` can be used within the grader.
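The substitution rules above can be illustrated with a small local sketch. This is not how the graders API implements templating; `render` and `resolve` are hypothetical names, and the sketch assumes nested values are reached only through dot-separated dictionary keys (it does not handle list indexing).

```python
import json
import re
from typing import Any

# Matches "{{ namespace.variable }}" with optional whitespace inside the braces.
TEMPLATE_RE = re.compile(r"\{\{\s*([A-Za-z_]\w*(?:\.\w+)*)\s*\}\}")
ALLOWED_NAMESPACES = {"item", "sample"}


def resolve(path: str, context: dict[str, Any]) -> Any:
    """Look up a dotted path such as 'item.reference_answer' in the context."""
    namespace, *keys = path.split(".")
    if namespace not in ALLOWED_NAMESPACES or not keys:
        raise ValueError(f"unsupported template reference: {path}")
    value: Any = context[namespace]
    for key in keys:
        value = value[key]  # assumption: nested values are dictionaries
    return value


def render(template: str, context: dict[str, Any]) -> str:
    """Replace every {{ namespace.variable }} reference with its value."""
    def substitute(match: re.Match) -> str:
        value = resolve(match.group(1), context)
        # Strings are inserted as-is; other values are serialized as JSON.
        return value if isinstance(value, str) else json.dumps(value)
    return TEMPLATE_RE.sub(substitute, template)


if __name__ == "__main__":
    context = {
        "item": {"reference_answer": "Paris"},
        "sample": {"output_text": "paris"},
    }
    print(render("{{ item.reference_answer }} vs {{ sample.output_text }}", context))
```

A reference to any namespace other than `item` or `sample` raises a `ValueError` in this sketch, mirroring the rule that only those two namespaces are supported.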

Details on grading tool calls

When training a model to improve tool-calling behavior, you will need to write your grader to operate over the `sample.output_tools` variable. The contents of this variable will be the same as the contents of the `response.choices[0].message.tool_calls` ([see function calling docs](https://developers.openai.com/api/docs/guides/function-calling?api-mode=chat)).