$ npm install -g @openai/codex@0.125.0

New Features

- App-server integrations now support Unix socket transport, pagination-friendly resume/fork, sticky environments, and remote thread config/store plumbing. (#18255, #18892, #18897, #18908, #19008, #19014)

- App-server plugin management can install remote plugins and upgrade configured marketplaces. (#18917, #19074)

- Permission profiles now round-trip across TUI sessions, user turns, MCP sandbox state, shell escalation, and app-server APIs. (#18284, #18285, #18286, #18287, #19231)

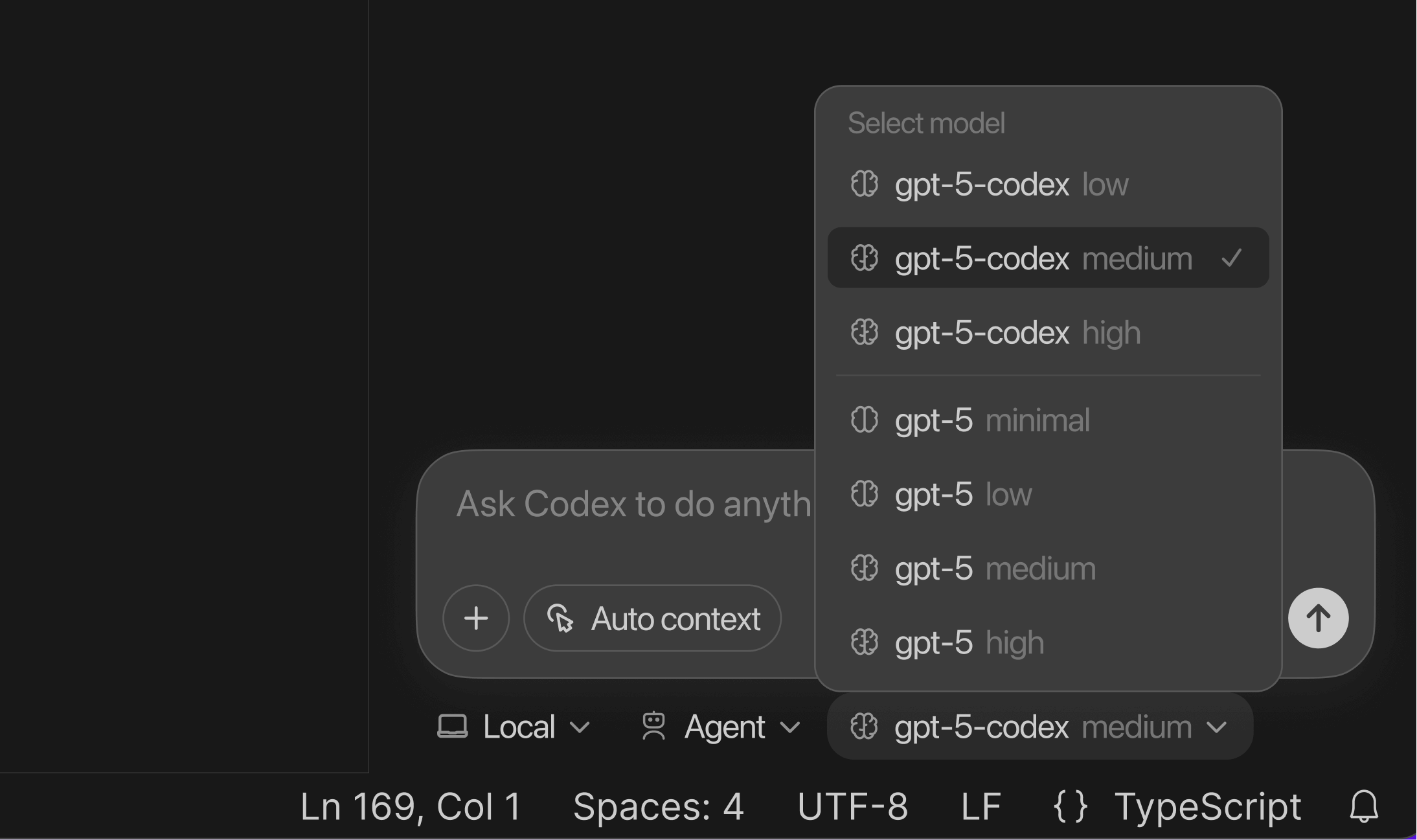

- Model providers now own model discovery, with AWS/Bedrock account state exposed to app clients. (#18950, #19048)

- `codex exec --json` now reports reasoning-token usage for programmatic consumers. (#19308)

- Rollout tracing now records tool, code-mode, session, and multi-agent relationships, with a debug reducer command for inspection. (#18878, #18879, #18880)
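For programmatic consumers of `codex exec --json`, a minimal sketch of totalling reasoning tokens from the output might look like the following. These notes do not state the event shape or the field name, so the sketch makes two assumptions: that the output is one JSON object per line, and that token counts sit in a `usage` object. It then sums any integer field in that object whose key contains `reasoning`. The helper name `reasoning_tokens` is hypothetical.

```python
import json
from typing import Iterable


def reasoning_tokens(jsonl_lines: Iterable[str]) -> int:
    """Sum integer `usage` fields whose key mentions 'reasoning'.

    Assumption: each line is a standalone JSON object and token counts
    live under a top-level "usage" object. Adjust to the real schema.
    """
    total = 0
    for line in jsonl_lines:
        line = line.strip()
        if not line:
            continue
        try:
            event = json.loads(line)
        except json.JSONDecodeError:
            continue  # ignore anything that is not valid JSON
        if not isinstance(event, dict):
            continue
        usage = event.get("usage")
        if not isinstance(usage, dict):
            continue
        for key, value in usage.items():
            is_count = isinstance(value, int) and not isinstance(value, bool)
            if "reasoning" in key and is_count:
                total += value
    return total
```

To use it on live output, pipe the command into a script that calls `reasoning_tokens(sys.stdin)`. Check the field name against the generated schema before relying on it.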

Bug Fixes

- Interrupting `/review` and exiting the TUI no longer leaves the interface wedged on delegate startup or unsubscribe. (#18921)

- Exec-server no longer drops buffered output after process exit and now waits correctly for stream closure. (#18946, #19130)

- App-server now respects explicitly untrusted project config instead of auto-persisting trust. (#18626)

- WebSocket app-server clients are less likely to disconnect during bursts of turn and tool-output notifications. (#19246)

- Windows sandbox startup handles multiple CLI versions and installed app directories better, and background `Start-Process` calls avoid visible PowerShell windows. (#19044, #19180, #19214)

- Config/schema handling now rejects conflicting MultiAgentV2 thread limits, resolves relative agent-role config paths, hides unsupported MCP bearer-token fields, and rejects invalid `js_repl` image MIME types. (#19129, #19261, #19294, #19292)
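The exec-server fix in this list (#18946, #19130) follows a general rule: a process exiting does not mean its output has been fully read, so the reader should keep draining until the stream reports end-of-file. The sketch below shows that pattern with Python's standard `subprocess` module. It is not the exec-server implementation, and `run_and_collect` is a hypothetical helper name.

```python
import subprocess
from typing import List, Tuple


def run_and_collect(cmd: List[str]) -> Tuple[int, bytes]:
    """Run cmd and return (exit_code, combined stdout/stderr output).

    Output is read until EOF rather than until the process exits, so
    bytes still buffered in the pipe at exit time are not lost.
    """
    proc = subprocess.Popen(
        cmd, stdout=subprocess.PIPE, stderr=subprocess.STDOUT
    )
    assert proc.stdout is not None
    chunks = []
    while True:
        # read() returns b"" only once the write end of the pipe is closed.
        chunk = proc.stdout.read(4096)
        if not chunk:
            break
        chunks.append(chunk)
    proc.stdout.close()
    # The stream is closed; now reap the process and get its exit code.
    return proc.wait(), b"".join(chunks)
```

Reading to EOF before calling `wait()` also avoids the deadlock that can happen when a child blocks on a full pipe while the parent waits for it to exit.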

Documentation

- App-server docs and generated schemas were refreshed for the new transport, thread, marketplace, sticky environment, and permission-profile APIs. (#18255, #18897, #19014, #19074, #19231)

- Rollout-trace documentation now covers the debug trace reduction workflow. (#18880)

Chores

- Refreshed `models.json` and related core, app-server, SDK, and TUI fixtures for the latest model catalog and reasoning defaults. (#19323)

- Windows Bazel CI now uses a stable PATH and shared query startup path for better cache reuse. (#19161, #19232)

- Plugin marketplace add/remove/startup-sync internals moved out of `codex-core`, and curated plugin cache versions now use short SHAs. (#19099, #19095)

- Reverted a macOS signing entitlement change after it caused alpha startup failures. (#19167, #19350)

- Stabilized flaky approval-popup and plugin MCP tool-discovery tests. (#19178, #19191)

Changelog

Full Changelog: rust-v0.124.0...rust-v0.125.0

- #19129 Reject agents.max_threads with multi_agent_v2 @jif-oai

- #19130 exec-server: wait for close after observed exit @jif-oai

- #19149 Update safety check wording @etraut-openai

- #18284 tui: sync session permission profiles @bolinfest

- #18710 [codex] Fix plugin marketplace help usage @xli-oai

- #19127 feat: drop spawned-agent context instructions @jif-oai

- #18892 Add remote thread config loader protos @rasmusrygaard

- #19014 Add excludeTurns parameter to thread/resume and thread/fork @ddr-oai

- #18882 [codex] Route live thread writes through ThreadStore @wiltzius-openai

- #19008 [codex] Implement remote thread store methods @wiltzius-openai

- #18626 Respect explicit untrusted project config @etraut-openai

- #18255 app-server: add Unix socket transport @euroelessar

- #19167 ci: add macOS keychain entitlements @euroelessar

- #19099 Move marketplace add/remove and startup sync out of core. @xl-openai

- #19168 Use Auto-review wording for fallback rationale @maja-openai

- #18908 Add remote thread config endpoint @rasmusrygaard

- #18285 tui: carry permission profiles on user turns @bolinfest

- #18286 mcp: include permission profiles in sandbox state @bolinfest

- #18878 [rollout_trace] Trace tool and code-mode boundaries @cassirer-openai

- #18287 shell-escalation: carry resolved permission profiles @bolinfest

- #18946 fix(exec-server): retain output until streams close @bolinfest

- #19074 Add app-server marketplace upgrade RPC @xli-oai

- #19180 use a version-specific suffix for command runner binary in .sandbox-bin @iceweasel-oai

- #19178 Stabilize approvals popup disabled-row test @etraut-openai

- #18921 Fix /review interrupt and TUI exit wedges @etraut-openai

- #19191 Stabilize plugin MCP tools test @etraut-openai

- #19194 Mark hooks schema fixtures as generated @abhinav-oai

- #18288 tests: isolate approval fixtures from host rules @bolinfest

- #19044 guide Windows to use -WindowStyle Hidden for Start-Process calls @iceweasel-oai

- #19214 do not attempt ACLs on installed codex dir @iceweasel-oai

- #19161 ci: derive cache-stable Windows Bazel PATH @bolinfest

- #18811 refactor: route Codex auth through AuthProvider @efrazer-oai

- #19246 Increase app-server WebSocket outbound buffer @etraut-openai

- #19048 feat: expose AWS account state from account/read @celia-oai

- #18880 [rollout_trace] Add debug trace reduction command @cassirer-openai

- #18897 Add sticky environment API and thread state @starr-openai

- #18879 [rollout_trace] Trace sessions and multi-agent edges @cassirer-openai

- #19095 feat: Use short SHA versions for curated plugin cache entries @xl-openai

- #18950 feat: let model providers own model discovery @celia-oai

- #19206 app-server: persist device key bindings in sqlite @euroelessar

- #18917 [codex] Support remote plugin install writes @xli-oai

- #19231 permissions: make profiles represent enforcement @bolinfest

- #19261 Resolve relative agent role config paths from layers @etraut-openai

- #19232 ci: reuse Bazel CI startup for target-discovery queries @bolinfest

- #19292 Reject unsupported js_repl image MIME types @etraut-openai

- #19247 chore: apply truncation policy to unified_exec @sayan-oai

- #19294 Hide unsupported MCP bearer_token from config schema @etraut-openai

- #19308 Surface reasoning tokens in exec JSON usage @etraut-openai

- #19323 Update models.json and related fixtures @sayan-oai

- #19350 fix alpha build @jif-oai